TL;DR

Piloting AI audit tools requires structured planning, clear metrics, and disciplined execution. Define objectives, prepare data, manage timelines, and evaluate results before scaling; a focused pilot reduces risk while improving decision quality.

Why Piloting AI Audit Tools Matters

The auditing landscape is changing rapidly, driven by the increasing adoption of artificial intelligence (AI). For audit leaders, CFOs, and IT managers, carefully piloting AI audit tools is no longer optional; it is critical for competitive advantage and operational efficiency. This guide outlines best practices to help your organization evaluate and integrate these tools successfully.

AI audit tools are software applications that use artificial intelligence, machine learning, and natural language processing to automate and streamline parts of the audit process. These tools typically analyze large datasets, identify anomalies, automate routine tasks, and surface insights that human auditors might miss, enabling faster reviews, sharper insights, and seamless collaboration. Adoption is accelerating: according to AuditBoard’s 2025 Risk Intelligence Report, AI adoption in audit functions rose from 8% to 21% in one year (2024-2025), with early adopters saving up to 8,000 hours annually and achieving 20-40% productivity gains.

Define Clear Objectives and Success Metrics Before You Start

Before initiating any pilot program, clearly defining your objectives and establishing measurable success metrics is paramount. This ensures the pilot is focused and provides actionable insights for a go/no-go decision. A 2025 MIT study highlighting a 95% failure rate for enterprise generative AI pilots underscores the importance of a structured approach.

Organizations must identify specific pain points that AI should address, such as inefficient evidence collection, inconsistent risk scoring, or lengthy review times. Establishing quantifiable Key Performance Indicators (KPIs) is essential for evaluating the pilot's effectiveness. The new IIA Standards, effective January 9, 2025, mandate formal KPI definition for internal audit performance. These KPIs should align with the broader audit transformation strategy, ensuring that the pilot contributes to long-term goals. For example, audit teams must shift to being "strategic, data-driven, and tightly aligned with risk," requiring real-time visibility per Empowered Systems.

a) Identify specific inefficiencies in current audit processes.

b) Establish quantifiable KPIs such as time savings, error reduction, and user adoption rates.

c) Align pilot goals with the organization's overarching audit transformation strategy.

d) Utilize balanced scorecards for internal audit performance measurement as recommended by the IIA.
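The KPIs named in the list above (time savings, error reduction, and user adoption rate) can be computed from a handful of baseline and pilot figures. The following is a minimal sketch, not a prescribed method: the `PilotMeasurement` fields and the sample numbers are hypothetical and should be replaced with whatever your team actually tracks.

```python
from dataclasses import dataclass


@dataclass
class PilotMeasurement:
    """Baseline vs. pilot figures for one engagement (hypothetical fields)."""
    baseline_hours: float   # hours the work took before the pilot
    pilot_hours: float      # hours comparable work took with the tool
    baseline_errors: int    # rework items or review findings before
    pilot_errors: int       # rework items or review findings during the pilot
    licensed_users: int     # team members given access to the tool
    active_users: int       # team members who actually used it


def pct_reduction(before: float, after: float) -> float:
    """Percentage reduction from `before` to `after`; 0.0 without a baseline."""
    if before <= 0:
        return 0.0
    return (before - after) / before * 100.0


def pilot_kpis(m: PilotMeasurement) -> dict[str, float]:
    """Return the three KPIs as percentages, rounded to one decimal place."""
    adoption = m.active_users / m.licensed_users * 100.0 if m.licensed_users else 0.0
    return {
        "time_savings_pct": round(pct_reduction(m.baseline_hours, m.pilot_hours), 1),
        "error_reduction_pct": round(pct_reduction(m.baseline_errors, m.pilot_errors), 1),
        "adoption_rate_pct": round(adoption, 1),
    }


if __name__ == "__main__":
    # Illustrative numbers only, not results from any real pilot.
    sample = PilotMeasurement(400, 300, 20, 15, 10, 8)
    print(pilot_kpis(sample))
```

Agreeing on these definitions before the pilot starts keeps the later go/no-go discussion focused on the numbers rather than on how they were calculated.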

Select the Right Pilot Scope and Team

Choosing the appropriate scope and assembling the right team are critical steps for a successful AI audit tool pilot. A representative but manageable audit engagement allows for effective testing without overwhelming resources. Mid-market companies often find success by focusing on narrow-scope pilots, adapting enterprise tools for high-value specific processes.

Identifying a balanced team that includes both early adopters and thoughtful skeptics provides comprehensive feedback. This approach leverages enthusiasm while also addressing potential challenges head-on. The optimal team size should be large enough to provide meaningful evaluation but small enough to manage efficiently. Big Four firms, for example, are already assessing specific client tools to identify issues like bias, highlighting the need for diverse perspectives in evaluation.

i. Select a manageable audit engagement that is representative of typical work.

ii. Include a mix of early adopters and skeptics to gather diverse feedback.

iii. Determine an optimal team size for effective evaluation and manageability.

iv. Consider cross-functional involvement from IT and finance for broader insights.

AI Audit Tool Pilot Approaches: Comparison Matrix

This table compares different pilot strategies organizations can use when testing AI-powered audit tools, helping leaders choose the right approach based on their organization's size, risk tolerance, and goals.

Prepare Your Data and Infrastructure

Sound data preparation and robust infrastructure are prerequisites for AI audit tools to work well. The quality of your audit data directly affects the accuracy and reliability of AI outputs. According to Techment, AI systems require high data quality, with accuracy rates over 98% and completeness rates of at least 95%.

Integration with existing audit management systems is another critical consideration. Many enterprises struggle to integrate AI with legacy systems, and 60% of enterprises identify legacy integration as a main blocker to scaling AI. Organizations must also establish clear security, compliance, and data governance protocols. According to a 2025 Kiteworks study, 83% of organizations lack automated AI controls, leaving sensitive data exposed to public AI tools. This step is crucial for addressing data privacy and security concerns in AI audits.

a) Assess and clean audit data to meet AI tool requirements for accuracy and completeness.

b) Plan for seamless integration with current audit management systems, addressing any legacy system challenges.

c) Establish and enforce robust security, compliance, and data governance frameworks.

d) Implement automated cleansing, standardization, and validation for data quality as a best practice.
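The accuracy and completeness thresholds cited above can be checked with a small script before any data reaches the pilot tool. This is a sketch under stated assumptions: completeness is measured as the share of required fields that are populated, accuracy is approximated as the share of records passing a validation rule, and the field names and the `amount_is_numeric` rule are hypothetical.

```python
from typing import Callable, Optional

Record = dict[str, Optional[str]]

ACCURACY_THRESHOLD = 0.98      # "over 98%" per the figure cited above
COMPLETENESS_THRESHOLD = 0.95  # "at least 95%" per the figure cited above


def completeness(records: list[Record], required: list[str]) -> float:
    """Share of required fields that are populated across all records."""
    total = len(records) * len(required)
    if total == 0:
        return 0.0
    filled = sum(1 for r in records for f in required if r.get(f) not in (None, ""))
    return filled / total


def accuracy(records: list[Record], is_valid: Callable[[Record], bool]) -> float:
    """Share of records passing a validation rule (a proxy for accuracy)."""
    if not records:
        return 0.0
    return sum(1 for r in records if is_valid(r)) / len(records)


def amount_is_numeric(record: Record) -> bool:
    """Hypothetical rule: the 'amount' field must parse as a number."""
    try:
        float(record.get("amount") or "")
        return True
    except ValueError:
        return False


def meets_thresholds(acc: float, comp: float) -> bool:
    """True when accuracy is over 98% and completeness is at least 95%."""
    return acc > ACCURACY_THRESHOLD and comp >= COMPLETENESS_THRESHOLD
```

A rule-based pass rate is only a stand-in for true accuracy, which usually requires comparing a sample against source documents.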

Design a Structured Pilot Timeline and Milestones

A well-defined timeline with clear milestones is essential for managing expectations and maintaining momentum during an AI audit tool pilot. While there is no single "optimal duration," keeping pilots short is recommended to avoid the 95% failure rate associated with prolonged experimentation. Successful pilots typically run 30 to 90 days, balancing adequate testing with timely decision-making.

The timeline should include weekly check-ins, mid-pilot assessments, and final evaluation gates. This phased approach allows for continuous learning and adjustments. Large enterprises, for instance, take an average of nine months to scale pilots, compared to 90 days for mid-market firms, highlighting the need for efficient progression. Big Four firms are already advancing towards end-to-end AI audit automation by 2026, demonstrating the need for swift, decisive action.

i. Establish a pilot duration, typically 30-90 days, with defined start and end dates.

ii. Schedule regular weekly check-ins to monitor progress and address immediate issues.

iii. Conduct mid-pilot assessments to review initial findings and make necessary adjustments.

iv. Define clear evaluation gates for moving to the next phase or making a go/no-go decision.
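The milestones listed above can be laid out as concrete dates once a start date and duration are chosen. The sketch below is one possible layout, assuming check-ins every seven days, a mid-pilot assessment at the halfway point, and the final evaluation gate on the end date; the function name `pilot_schedule` and these placement rules are illustrative choices, not a standard.

```python
from datetime import date, timedelta


def pilot_schedule(start: date, duration_days: int) -> dict[str, object]:
    """Lay out check-ins, the mid-pilot assessment, and the final gate."""
    if duration_days <= 0:
        raise ValueError("duration_days must be positive")
    end = start + timedelta(days=duration_days)
    # Weekly check-ins: every 7 days after the start, strictly before the end.
    check_ins = [start + timedelta(days=d) for d in range(7, duration_days, 7)]
    return {
        "start": start,
        "weekly_check_ins": check_ins,
        "mid_pilot_assessment": start + timedelta(days=duration_days // 2),
        "final_evaluation_gate": end,
    }


if __name__ == "__main__":
    # Example: a 60-day pilot, within the typical 30-90 day range.
    for name, value in pilot_schedule(date(2025, 3, 3), 60).items():
        print(name, value)
```

Publishing the dates at kickoff makes it harder for the pilot to drift past its planned end.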

Train Users and Gather Continuous Feedback

Effective user training and continuous feedback loops are central to successful AI audit tool adoption. Audit teams have varying levels of tech comfort, so onboarding approaches must be flexible and comprehensive. According to a Wolters Kluwer survey, 45% of auditors see AI training as the top driver for adoption, and 84% prioritize AI skills in recruiting.

Creating feedback mechanisms, such as surveys, interviews, and usage analytics, helps identify pain points and opportunities for improvement. Addressing resistance proactively and building champions within the team fosters a positive environment for change. This is crucial for effectively transitioning to AI-augmented audits and avoiding the "middle maturity trap" where inconsistent governance undermines AI's full promise according to AuditBoard.

- Provide comprehensive training tailored to different tech comfort levels within the team.

- Implement structured feedback loops using surveys, interviews, and usage data.

- Actively address user resistance through open communication and demonstrating value.

- Identify and empower team members who can become internal champions for the new tools.

Evaluate Results and Make the Go/No-Go Decision

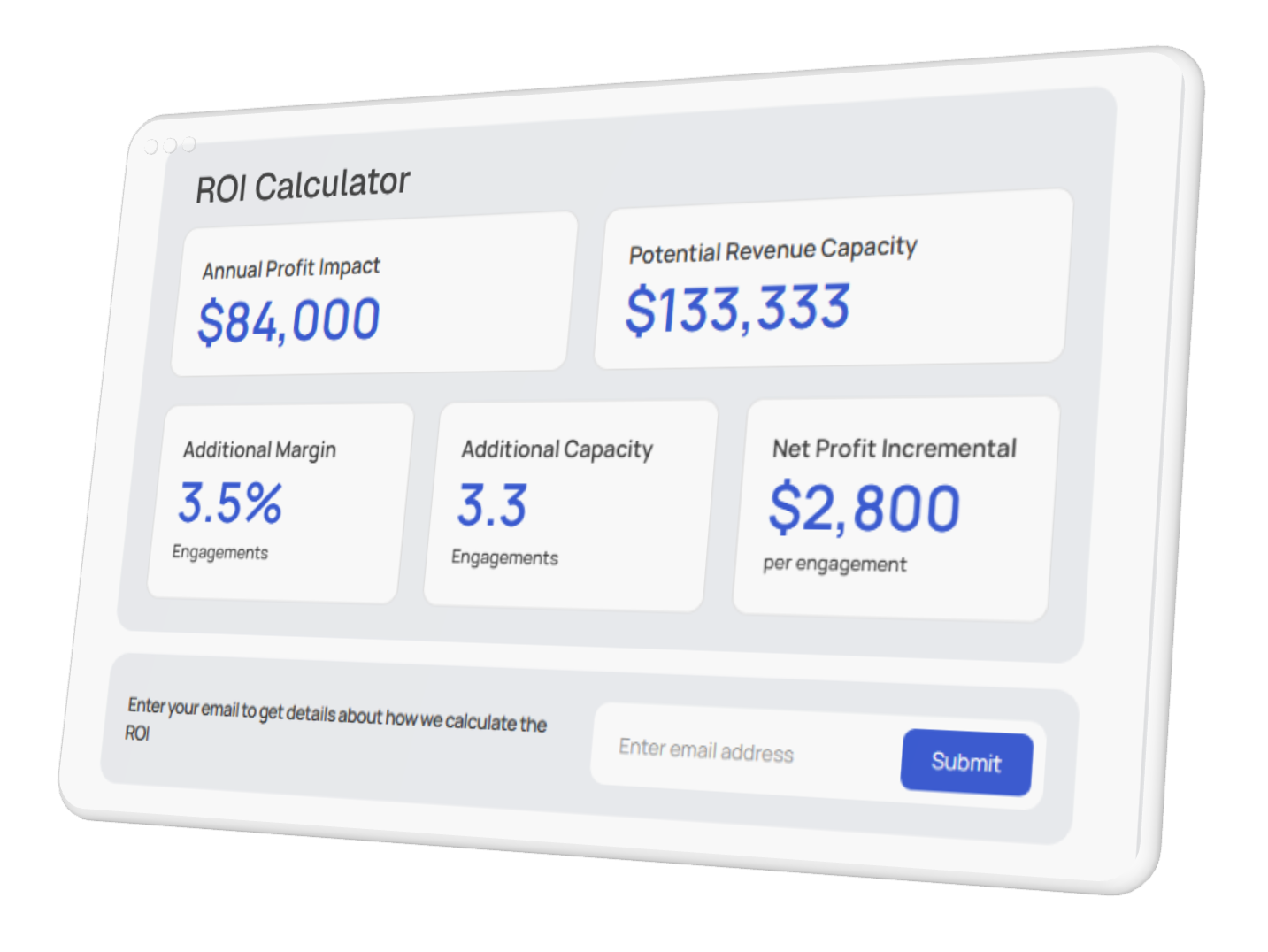

The ultimate goal of a pilot program is to inform a strategic go/no-go decision regarding full-scale implementation. This requires analyzing pilot data against your predefined success metrics. For example, a Stanford/MIT study found that generative AI users reallocated 8.5% of time (about 3.5 hours per 40-hour week) from routine tasks and closed month-end books 7.5 days faster.

A thorough cost-benefit analysis, including ROI calculations, is vital. Forrester’s Total Economic Impact study reported that WRITER customers achieved 333% ROI and $12.02 million NPV over three years, which illustrates the scale of return that is possible. When making the decision, consider whether to expand the AI tool's use, pivot to a different solution, or walk away if the benefits do not outweigh the costs and risks. A strategic implementation guide for transforming your audit practice with AI can provide further insights.

- Compare pilot outcomes directly against predefined success metrics and KPIs.

- Conduct a detailed cost-benefit and ROI analysis, quantifying tangible and intangible benefits.

- Assess user feedback and adoption rates to gauge team readiness and satisfaction.

- Make an informed go/no-go decision: expand, pivot, or discontinue the solution.
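The ROI and NPV figures used in the cost-benefit step follow standard formulas: ROI is net benefit divided by cost, and NPV is the sum of discounted yearly net benefits minus the initial cost. The sketch below implements both, plus a deliberately simple decision rule. The `decide` function, its `min_roi_pct` parameter, and the way it maps outcomes to expand, pivot, or discontinue are hypothetical; a real decision would also weigh user feedback, risk, and governance readiness.

```python
def roi_pct(total_benefits: float, total_costs: float) -> float:
    """Return on investment as a percentage of total costs."""
    if total_costs <= 0:
        raise ValueError("total_costs must be positive")
    return (total_benefits - total_costs) / total_costs * 100.0


def npv(rate: float, initial_cost: float, yearly_net_benefits: list[float]) -> float:
    """Net present value: discounted yearly net benefits minus the initial cost.

    `rate` is the annual discount rate (0.10 means 10%); the first entry of
    `yearly_net_benefits` is received at the end of year 1.
    """
    discounted = sum(
        cash / (1 + rate) ** year
        for year, cash in enumerate(yearly_net_benefits, start=1)
    )
    return discounted - initial_cost


def decide(kpis_met: bool, roi: float, min_roi_pct: float) -> str:
    """Hypothetical rule mapping pilot results to expand / pivot / discontinue."""
    if kpis_met and roi >= min_roi_pct:
        return "expand"
    if kpis_met or roi >= min_roi_pct:
        return "pivot"
    return "discontinue"


if __name__ == "__main__":
    # Illustrative figures only, not drawn from any cited study.
    roi = roi_pct(total_benefits=150_000, total_costs=100_000)
    value = npv(rate=0.10, initial_cost=100_000, yearly_net_benefits=[60_000] * 3)
    print(roi, round(value, 2), decide(kpis_met=True, roi=roi, min_roi_pct=30.0))
```

Setting the minimum ROI and the KPI targets before the pilot begins keeps the final decision from being fitted to the results after the fact.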

Key Takeaways

- Clear objectives and measurable KPIs are foundational for a successful AI audit tool pilot.

- Scope and team: Select a manageable scope and a balanced team for relevant, actionable feedback.

- Data and integration: High-quality data and seamless integration are critical for AI tool performance.

- Timelines and feedback: Structured timelines and continuous feedback loops are essential.

- Evaluation: Thorough comparison to predefined metrics and robust ROI analysis drive go/no-go decisions.

- Governance and skills: Address governance gaps and invest in skill development for successful adoption.

Conclusion: From Pilot to Production

Piloting AI audit tools is a strategic imperative for audit functions seeking to enhance efficiency, accuracy, and insight. By following best practices, from defining clear objectives and preparing data to training users and rigorously evaluating results, organizations can navigate the complexities of AI adoption. This structured approach mitigates risk and maximizes the potential for transformative benefits.

Finspectors supports structured pilot programs with audit teams, providing the tools and expertise needed for faster reviews, sharper insights, and seamless collaboration. Our platform helps audit teams streamline evidence collection, automate risk scoring, and accelerate financial reviews, allowing auditors to focus on judgment rather than grunt work. As AI continues to evolve, strong governance and documentation for AI-powered audit tools are crucial to long-term success and regulatory compliance. The next step for forward-thinking organizations is to scale AI adoption across their audit function, turning pilot successes into widespread operational advantages.