TL;DR: What You Need to Know in 60 Seconds

The Problem: Manual audits can't scale with modern data volumes. Sample-based testing misses critical risks hiding in billions of transactions.

The Solution: AI platforms analyze 100% of transactions in real time, detecting anomalies that sample-based reviews would never surface and predicting risks before they materialize.

The Results:

a) 50% faster audit cycles

b) 100% data coverage vs. 1-5% sampling

c) Proactive risk prevention vs. reactive problem-finding

d) 27.9% market growth through 2033 signals this is becoming essential infrastructure

The Action: Start with a focused pilot in one high-value audit area. Organizations that implement AI today gain compounding advantages as their systems learn and improve over time.

The Bottom Line: This isn't about automating existing processes - it's about doing things that were previously impossible. The audit function is transforming from periodic compliance checking to continuous intelligent assurance.

Why it matters

i. 100% data coverage vs. sample-based assumptions

ii. Real-time anomaly detection - catch issues as they happen

iii. Predictive insights - spot emerging risk, not just past mistakes

iv. Market momentum: rapid investment and adoption - AI audit tech is now core infrastructure
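To make the coverage point concrete, here is a minimal sketch of how often a random sample misses every anomalous transaction. The population size, anomaly count, and sample size are illustrative assumptions, not figures from any real audit, and `miss_probability` is a hypothetical helper name.

```python
from math import comb


def miss_probability(population: int, anomalies: int, sample_size: int) -> float:
    """Probability that a simple random sample (without replacement)
    contains none of the anomalous transactions."""
    clean = population - anomalies
    if sample_size > clean:
        # The sample is larger than the clean set, so it must include an anomaly.
        return 0.0
    # Hypergeometric: ways to draw only clean items / ways to draw any items.
    return comb(clean, sample_size) / comb(population, sample_size)


# Illustrative assumption: 10,000 transactions, 10 anomalous, 5% sample.
p_miss = miss_probability(population=10_000, anomalies=10, sample_size=500)
print(f"Chance a 5% sample misses all 10 anomalies: {p_miss:.1%}")
```

Under these assumed numbers, the sample misses every anomaly more often than not. Full-population analysis removes that sampling risk, though it still depends on the detection logic being sound.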

What AI actually does

a) Automated ingestion: OCR + NLP turn documents into usable data

b) Adaptive anomaly detection: ML learns “normal” and flags deviations

c) Continuous monitoring: transaction-by-transaction checks, not snapshots

d) Predictive analytics: forecasts risk trends (liquidity, fraud, compliance)

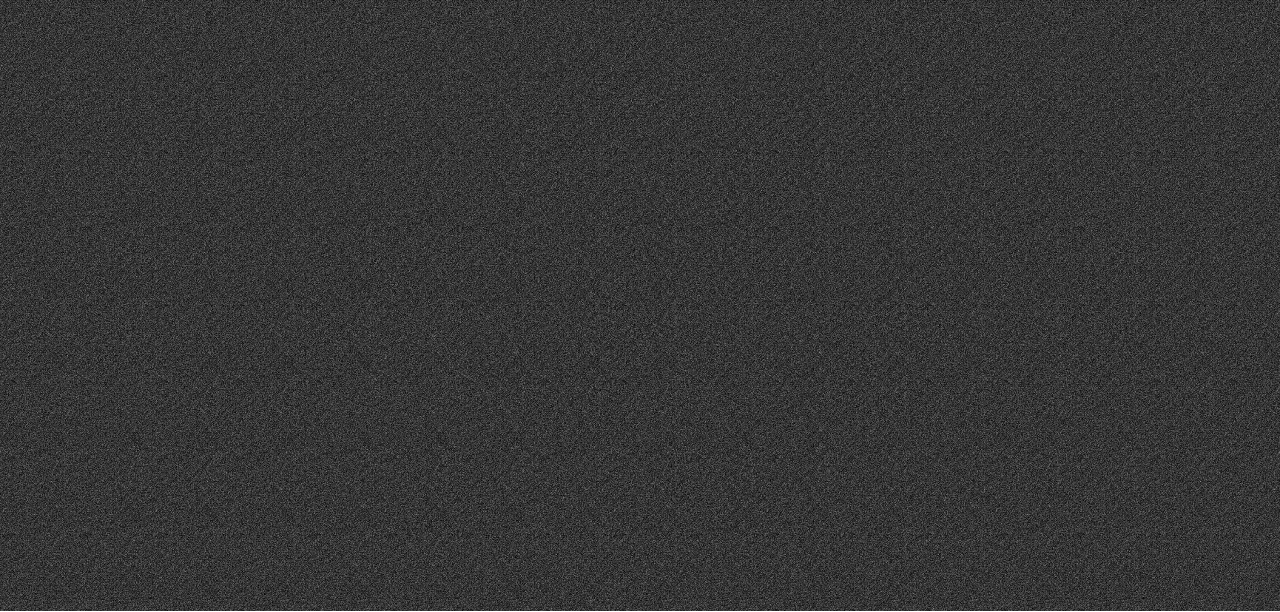
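The "learn normal, flag deviations" idea can be sketched with a simple statistical baseline. This is a stand-in for illustration only: it uses a median/MAD modified z-score rather than a trained ML model, and the function names, threshold, and sample amounts are all assumptions.

```python
from statistics import median


def fit_baseline(history):
    """Learn 'normal' from historical amounts: the median and the
    median absolute deviation (MAD), both robust to outliers."""
    center = median(history)
    mad = median(abs(a - center) for a in history)
    return center, mad


def flag_anomalies(amounts, center, mad, threshold=3.5):
    """Return (index, amount, score) for every amount whose modified
    z-score exceeds the threshold (3.5 is a common rule of thumb)."""
    if mad == 0:
        return []  # no spread in the history, so scores are undefined
    flagged = []
    for i, amount in enumerate(amounts):
        score = 0.6745 * abs(amount - center) / mad
        if score > threshold:
            flagged.append((i, amount, round(score, 1)))
    return flagged


# Hypothetical expense amounts: history defines "normal", new items are scored.
history = [120.0, 135.5, 118.2, 140.0, 125.0, 131.4, 128.9, 122.3]
incoming = [127.0, 4999.0, 133.0]

center, mad = fit_baseline(history)
flagged = flag_anomalies(incoming, center, mad)
print(flagged)
```

A production platform would typically use richer features (vendor, timing, approver) and retrain the baseline as behavior shifts. The pattern of scoring each transaction against learned norms stays the same.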

Proven payoff

Crowe MacKay LLP: fewer manual samples, cross-correlation of many risk factors, and detection of anomalies hidden from conventional tests - allowing auditors to focus on true risk.

Rapid 6-step pilot roadmap

- Pick one high-volume process (payables, expenses, revenue)

- Set a measurable objective (e.g., 100% coverage, or a 40% reduction in fraud detection time)

- Audit your data readiness - completeness, format, accessibility

- Run a 6-8 week pilot with clear success metrics

- Train auditors to interpret model outputs (not just dashboards)

- Govern & iterate - bias checks, explainability docs, monitoring cadence
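The data readiness step in the roadmap above can start as a simple completeness and format report. The sketch below assumes a hypothetical payables schema (`invoice_id`, `vendor`, `amount`, `posted_on`) and ISO dates; swap in the fields and formats your own systems use.

```python
from datetime import datetime

# Hypothetical schema for a payables pilot; adjust to your own export.
REQUIRED_FIELDS = ("invoice_id", "vendor", "amount", "posted_on")


def readiness_report(records):
    """Summarize completeness and format problems in a list of dict records."""
    total = len(records)
    missing = {field: 0 for field in REQUIRED_FIELDS}
    bad_amount = 0
    bad_date = 0
    for rec in records:
        for field in REQUIRED_FIELDS:
            if rec.get(field) in (None, ""):
                missing[field] += 1
        amount = rec.get("amount")
        if amount not in (None, ""):
            try:
                float(amount)
            except (TypeError, ValueError):
                bad_amount += 1  # e.g. a decimal comma such as "12,40"
        posted = rec.get("posted_on")
        if posted not in (None, ""):
            try:
                datetime.strptime(posted, "%Y-%m-%d")
            except (TypeError, ValueError):
                bad_date += 1  # not in the assumed YYYY-MM-DD format
    completeness = {
        field: (1 - missing[field] / total) if total else 0.0
        for field in REQUIRED_FIELDS
    }
    return {
        "rows": total,
        "completeness": completeness,
        "bad_amount_format": bad_amount,
        "bad_date_format": bad_date,
    }


# Made-up sample rows, for illustration only.
sample = [
    {"invoice_id": "A1", "vendor": "Acme", "amount": "100.50", "posted_on": "2024-01-15"},
    {"invoice_id": "A2", "vendor": "", "amount": "12,40", "posted_on": "2024-01-16"},
    {"invoice_id": "A3", "vendor": "Globex", "amount": "75", "posted_on": "16/01/2024"},
    {"invoice_id": "A4", "vendor": "Initech", "amount": None, "posted_on": "2024-01-17"},
]
report = readiness_report(sample)
print(report)
```

Running a report like this before the pilot gives a baseline for the "cleanse pilot dataset first" advice and a number to track as data quality improves.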

Common blockers & quick fixes

Poor data quality → Start small; cleanse pilot dataset first.

“Black box” concerns → Use interpretable models and add model-logic notes to findings.

Resistance to change → Show fast wins; pair AI outputs with auditor review.

Legacy systems → Use connectors or export layers for pilot scope.

Near-future trends that matter

Generative AI will draft audit summaries and evidence narratives.

AI + blockchain will pair tamper-evident audit trails with analytics.

Audit-as-a-Service: subscription-based continuous monitoring.

Hyperautomation: AI + RPA for end-to-end audit workflows.

Why start now

AI audit platforms turn assurance from hindsight into foresight. A focused pilot produces measurable wins quickly, builds confidence, and creates the playbook to scale. Start small, measure fast, scale smart.