TL;DR

Summaries help, but risk signals move audit decisions. Use generative AI to join narrative text with ledger data so risk surfaces earlier, turn anomalies into calibrated signals, triage exceptions with a human in the loop (AI drafts; auditors decide), design scenario-based tests, and produce clear, reproducible documentation that satisfies inspectors.

1) Fuse narratives with numbers to spot risk early

Most risk hides where what’s written meets what’s posted. Align GL narration, invoice text, emails, and notes with entries, vendors, timing, and users to reveal clusters - e.g., duplicate intent with different descriptions, or period-end postings sharing similar language. The output isn’t prose; it’s ranked leads for scoping.
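As a minimal sketch of the "duplicate intent with different descriptions" idea, the Python below pairs journal lines that share a vendor and amount but carry different narration, then ranks the pairs by amount. The entry IDs, vendors, amounts, and the token-sorting similarity measure are all illustrative assumptions, not a prescribed method; a real pipeline would likely use richer text matching.

```python
from difflib import SequenceMatcher
from itertools import combinations

# Hypothetical journal lines: (entry_id, vendor, amount, narration)
ENTRIES = [
    ("JE-101", "Acme Ltd", 12500.00, "Consulting fee Q4 project Atlas"),
    ("JE-102", "Acme Ltd", 12500.00, "Q4 Atlas project consulting fees"),
    ("JE-103", "Borealis", 880.00, "Office supplies"),
    ("JE-104", "Acme Ltd", 4300.00, "Travel reimbursement"),
]


def narration_similarity(a: str, b: str) -> float:
    """Compare two narrations after lowercasing and sorting their tokens."""
    norm_a = " ".join(sorted(a.lower().split()))
    norm_b = " ".join(sorted(b.lower().split()))
    return SequenceMatcher(None, norm_a, norm_b).ratio()


def duplicate_intent_leads(rows):
    """Pair entries with the same vendor and amount but non-identical narration.

    Returns (id_a, id_b, amount, similarity) tuples, largest amount first.
    """
    leads = []
    for a, b in combinations(rows, 2):
        same_vendor_and_amount = a[1] == b[1] and a[2] == b[2]
        if same_vendor_and_amount and a[3] != b[3]:
            similarity = round(narration_similarity(a[3], b[3]), 2)
            leads.append((a[0], b[0], a[2], similarity))
    return sorted(leads, key=lambda lead: lead[2], reverse=True)


leads = duplicate_intent_leads(ENTRIES)
for lead in leads:
    print(lead)
```

The output is a short list of pairs to scope, not a conclusion: the similarity score only gives the auditor context on how closely the two descriptions overlap.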

2) Turn anomalies into calibrated risk signals

An outlier alone is noise. Tie it to materiality and assertions so it becomes a calibrated signal. Set thresholds, weight patterns that matter (e.g., off-hours postings, one user dominating close journals), and reduce alert noise so teams act on fewer, sharper prompts.
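One way to picture calibration is a pattern score scaled by materiality and cut at a threshold. The sketch below is an assumption-laden illustration: the weights, the off-hours window, the 50% user-dominance cutoff, the materiality figure, and the alert threshold are all hypothetical placeholders that an engagement team would set during planning.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    entry_id: str
    amount: float
    posted_hour: int             # 0-23, local time
    user_share_of_close: float   # fraction of close journals posted by this user


# Hypothetical calibration values, for illustration only
WEIGHTS = {"off_hours": 0.4, "user_dominance": 0.6}
PERFORMANCE_MATERIALITY = 50_000.0
ALERT_THRESHOLD = 0.5


def risk_signal(entry: Entry) -> float:
    """Weight the patterns that matter, then scale by size relative to materiality."""
    pattern_score = 0.0
    if entry.posted_hour < 6 or entry.posted_hour >= 22:
        pattern_score += WEIGHTS["off_hours"]
    if entry.user_share_of_close > 0.5:
        pattern_score += WEIGHTS["user_dominance"]
    materiality_factor = min(abs(entry.amount) / PERFORMANCE_MATERIALITY, 1.0)
    return pattern_score * materiality_factor


entries = [
    Entry("JE-201", 60_000.0, 23, 0.7),   # large, off-hours, dominant user
    Entry("JE-202", 5_000.0, 23, 0.7),    # same patterns, small amount
    Entry("JE-203", 60_000.0, 10, 0.2),   # large, but no pattern
]
alerts = [e.entry_id for e in entries if risk_signal(e) >= ALERT_THRESHOLD]
print(alerts)
```

Of the three entries, only the one that combines the weighted patterns with a material amount clears the threshold, which is the noise reduction the section describes.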

3) Triage exceptions with a human in the loop

Let AI draft reason codes, likely root causes, and next steps; auditors accept or edit, and that becomes the recorded judgment. Link every decision to the evidence, the model version, and the assertion at stake. AI plays workflow assistant, not black box - and you get a clean chain of review.
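The chain of review can be sketched as an immutable record that keeps the AI draft next to the auditor's final call. Every name here (`AIDraft`, `TriageDecision`, `record_decision`, the reason codes, and the evidence references) is hypothetical; the point is only that the decision, evidence, model version, and assertion travel together.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional, Tuple


@dataclass(frozen=True)
class AIDraft:
    reason_code: str
    likely_root_cause: str
    next_step: str
    model_version: str


@dataclass(frozen=True)
class TriageDecision:
    exception_id: str
    assertion: str
    evidence_refs: Tuple[str, ...]
    draft: AIDraft              # what the AI proposed, kept unchanged
    final_reason_code: str      # what the auditor recorded
    reviewer: str
    edited: bool                # True when the auditor changed the draft
    decided_at: str


def record_decision(exception_id: str, assertion: str, evidence_refs: Tuple[str, ...],
                    draft: AIDraft, reviewer: str,
                    override_reason_code: Optional[str] = None) -> TriageDecision:
    """Store the auditor's judgment, linked to evidence, model version, and assertion."""
    if not evidence_refs:
        raise ValueError("a decision must link to at least one piece of evidence")
    final_code = override_reason_code or draft.reason_code
    return TriageDecision(
        exception_id=exception_id,
        assertion=assertion,
        evidence_refs=tuple(evidence_refs),
        draft=draft,
        final_reason_code=final_code,
        reviewer=reviewer,
        edited=final_code != draft.reason_code,
        decided_at=datetime.now(timezone.utc).isoformat(),
    )


draft = AIDraft("TIMING", "Invoice posted after period end", "Inspect goods receipt", "model-v1")
decision = record_decision("EX-17", "cut-off", ("INV-9931", "GRN-4410"), draft,
                           reviewer="j.doe", override_reason_code="DUPLICATE")
print(decision.final_reason_code, decision.edited, decision.draft.model_version)
```

Keeping the draft and the final code side by side shows an inspector exactly where human judgment departed from the suggestion.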

4) Aim testing with scenario intelligence

Ask what-ifs: if a vendor’s risk ticks up, or period-end clusters shift earlier by a day, what changes? Use answers to resize samples, add targeted cut-off tests, or expand related-party checks - designing sharper procedures without bloating the plan.
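A what-if can be as simple as re-running the sample plan under a changed risk rating and reading off the difference. The multipliers, base sample size, and vendor data below are hypothetical and are not drawn from any sampling standard; the sketch only shows the mechanics of comparing a baseline plan with a scenario.

```python
import math

# Hypothetical multipliers: how much a risk rating scales the base sample
RISK_MULTIPLIER = {"low": 1.0, "moderate": 1.5, "high": 2.0}
BASE_SAMPLE = 25


def sample_size(risk: str, population: int) -> int:
    """Scale the base sample by risk, never exceeding the population."""
    return min(population, math.ceil(BASE_SAMPLE * RISK_MULTIPLIER[risk]))


def what_if(plan: dict, scenario: dict) -> dict:
    """Return {vendor: (before, after)} for vendors whose sample size changes."""
    changes = {}
    for vendor, info in plan.items():
        before = sample_size(info["risk"], info["population"])
        after = sample_size(scenario.get(vendor, info["risk"]), info["population"])
        if after != before:
            changes[vendor] = (before, after)
    return changes


plan = {
    "Acme Ltd": {"risk": "low", "population": 400},
    "Borealis": {"risk": "moderate", "population": 30},
}
# Scenario: Acme's risk ticks up from low to high
changes = what_if(plan, {"Acme Ltd": "high"})
print(changes)
```

Only the vendors affected by the scenario appear in the result, so the plan grows where the risk moved and stays flat elsewhere.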

5) Document explainably so inspectors stay comfortable

Draft workpaper snippets that explain what the system did, why the score was assigned, and which assertion the step supports - including inputs, transformations, limits, and the reviewer’s conclusion. Inspectors care less about the algorithm’s brand and more about a clear, reproducible trail.
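To make the trail concrete, here is a minimal sketch that renders one step as a plain-text workpaper note. The field names, the sample values, and the layout are assumptions for illustration; a firm's own workpaper template would dictate the real structure.

```python
def workpaper_snippet(step: dict) -> str:
    """Render one procedure step as a plain-text workpaper note."""
    lines = [
        f"Step: {step['step_id']} (assertion: {step['assertion']})",
        f"Inputs: {', '.join(step['inputs'])}",
        "Transformations:",
    ]
    lines += [f"  {i}. {t}" for i, t in enumerate(step["transformations"], start=1)]
    lines += [
        f"Score: {step['score']:.2f} because {step['score_rationale']}",
        f"Limits: {step['limits']}",
        f"Model version: {step['model_version']}",
        f"Reviewer conclusion ({step['reviewer']}): {step['conclusion']}",
    ]
    return "\n".join(lines)


# Hypothetical step data
step = {
    "step_id": "JE-TEST-03",
    "assertion": "cut-off",
    "inputs": ["GL extract FY24", "AP invoice register"],
    "transformations": ["Joined on invoice number", "Flagged postings within 3 days of period end"],
    "score": 0.82,
    "score_rationale": "off-hours posting by a user who dominates close journals",
    "limits": "Narration matching is approximate; scanned invoices were excluded",
    "model_version": "model-v1",
    "reviewer": "j.doe",
    "conclusion": "Exception confirmed; cut-off test extended",
}
snippet = workpaper_snippet(step)
print(snippet)
```

Because the note is generated from structured fields, the same inputs reproduce the same text, which supports the reproducible trail inspectors look for.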

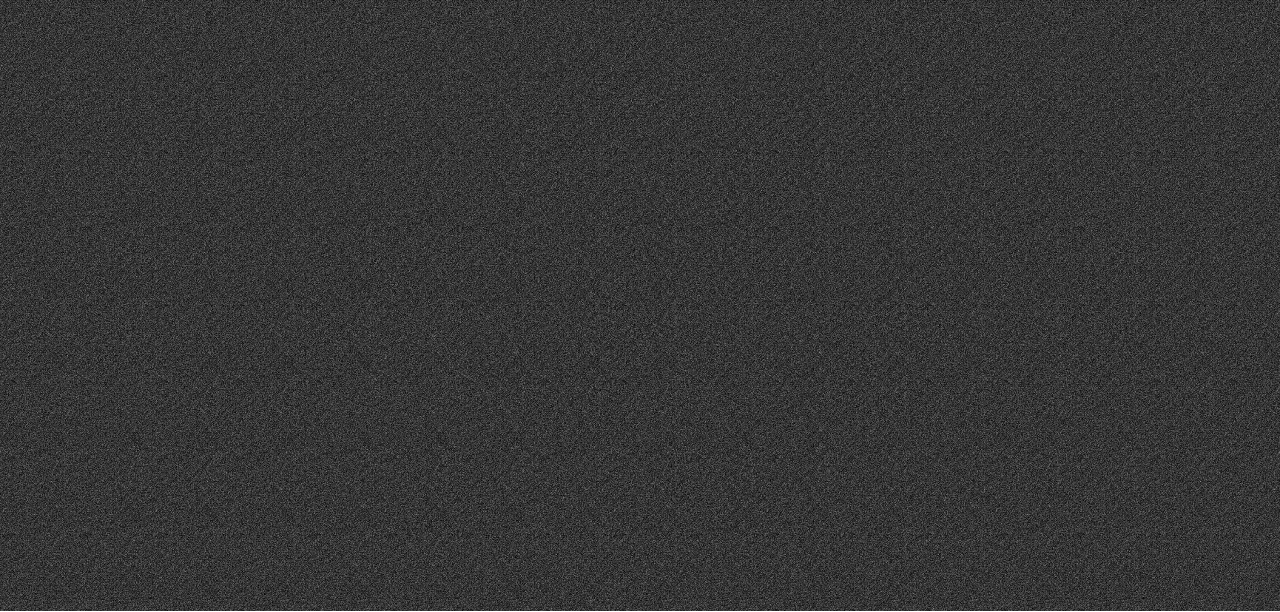

What “good” looks like (targets to track)

- Review time per 1k rows: 25-40% faster (goal, not guarantee)

- First-pass closure rate: +15-30%

- Rework rate: 20-30% lower

- Trail completeness: Decisions linked to evidence, versions, and assertions.

Summaries vs signals (why this matters)

| | Key point | What you can do next |
| --- | --- | --- |
| Summaries | Narrative recap | Read more; limited actionability |
| Signals | Calibrated, ranked leads | Change scope, resize samples, add tests, and document why |