How to Choose the Right Audit Analytics Platform for Your Firm

The market for audit analytics and automation is crowded. The right platform is not the one with the most features; it is the one that fits your audits, your data, and your team. Here is a structured way to evaluate options.

1. Define Your Use Cases and Priorities

- Key point: Before comparing vendors, be clear on what you need the platform to do first.

- Core use cases: Common starting points include (1) risk scoring and prioritization (e.g., transaction or account risk), (2) evidence collection and organization, (3) anomaly detection and testing, (4) workpaper and narrative support, and (5) reporting and committee dashboards. Rank which of these matter most over the next 12 to 24 months.

- Audit mix: Consider whether you need strength in financial statement audits, internal audit, compliance, or a mix. Some platforms are built for statutory audit workflows; others are broader GRC tools.

- Scale: Estimate data volumes (e.g., transaction counts, entities, years) and number of concurrent users. This will drive requirements for performance, connectors, and licensing.

- Output: List the must-have outputs (e.g., risk reports, exception lists, workpaper exports, committee summaries) so you can check whether each vendor can deliver them.
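
The scale estimate above can start as simple arithmetic. The sketch below is only an illustration: the function names, the 500-byte average row size, and the sample figures are assumptions, not numbers from any vendor or firm.

```python
# Hypothetical sizing sketch; every figure below is a placeholder assumption.

def estimate_rows(transactions_per_entity_per_year: int, entities: int, years: int) -> int:
    """Total transaction rows the platform would need to ingest."""
    return transactions_per_entity_per_year * entities * years


def estimate_storage_gb(rows: int, bytes_per_row: int = 500) -> float:
    """Rough raw storage in GB, assuming a fixed average row size."""
    return rows * bytes_per_row / 1e9


# Example: 2 million transactions per entity per year, 12 entities, 3 years.
rows = estimate_rows(transactions_per_entity_per_year=2_000_000, entities=12, years=3)
storage_gb = estimate_storage_gb(rows)
print(f"{rows:,} rows, about {storage_gb:.1f} GB raw")
```

Taking figures like these into vendor conversations makes it easier to ask whether file-size limits, connector throughput, or volume-based licensing would become a constraint.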

2. Assess Data Connectivity and Ingestion

- Key point: Analytics are only as good as the data that feeds them. Evaluate how easily the platform can get your data.

- Connectors and APIs: Does the platform connect to your main data sources (ERP, GL, bank feeds, HR, etc.) via native connectors or well-documented APIs? How much custom integration is required?

- Formats and volume: What file formats and sizes are supported (e.g., CSV, Excel, database exports)? Are there limits that would block you at scale?

- Refresh and latency: Can data be refreshed on a schedule or on demand? Do your use cases need near real-time data, or is a daily or weekly refresh sufficient?

- Data quality and mapping: How does the platform handle mapping (e.g., chart of accounts, entity IDs) and data quality issues? Transparent mapping and clear error handling reduce implementation risk.
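
As an illustration of what "transparent mapping and clear error handling" might look like, here is a minimal sketch that maps local account codes to a standard chart and reports unmapped rows instead of silently dropping them. The account codes, field names, and `map_accounts` helper are hypothetical and do not represent any platform's API.

```python
from dataclasses import dataclass, field


@dataclass
class MappingResult:
    mapped: list = field(default_factory=list)    # rows with a standard account attached
    unmapped: list = field(default_factory=list)  # rows whose account code has no mapping


def map_accounts(gl_rows, account_map):
    """Attach a standard account to each row; collect rows that cannot be mapped."""
    result = MappingResult()
    for row in gl_rows:
        target = account_map.get(row["account"])
        if target is None:
            result.unmapped.append(row)
        else:
            result.mapped.append({**row, "standard_account": target})
    return result


# Hypothetical mapping table and general ledger extract.
account_map = {"4000": "Revenue", "5000": "Cost of sales"}
gl_rows = [
    {"account": "4000", "amount": 1200.0},
    {"account": "5000", "amount": -700.0},
    {"account": "9999", "amount": 15.0},  # no mapping defined
]

result = map_accounts(gl_rows, account_map)
unmapped_codes = sorted({r["account"] for r in result.unmapped})
print(len(result.mapped), "rows mapped; unmapped codes:", unmapped_codes)
```

During a demo, it is worth asking the vendor to show the equivalent of the `unmapped` list: where mapping failures surface and who is expected to resolve them.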

3. Understand Risk and Analytics Logic

- Key point: Risk scoring and anomaly detection must be understandable and defensible to auditors and regulators.

- Explainability: Can the platform explain why a transaction or account received a given risk score or was flagged? Explainable risk scoring is increasingly expected.

- Configurability: Can you adjust rules, thresholds, or models to align with your audit approach and risk appetite, or is everything a black box?

- Methodology and documentation: Does the vendor provide clear documentation of methodologies (e.g., statistical tests, rules, ML approach) so you can describe them in workpapers and to oversight?

- Benchmarks and validation: Where relevant, can you validate results against cases you already know about (e.g., known exceptions, prior-year findings) to build confidence before rollout?
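
To make explainability and validation concrete, the sketch below uses a simple rule-based score that returns the reasons behind each flag, then checks how many known exceptions were caught. The rules, weights, flag threshold, and transactions are invented for illustration; real platforms may use statistical or ML methods, which this sketch does not attempt to reproduce.

```python
from datetime import date

# Hypothetical rules: (name, weight, test). All values are illustrative assumptions.
RULES = [
    ("round_amount", 2, lambda t: t["amount"] % 1000 == 0),
    ("weekend_posting", 3, lambda t: t["posted"].weekday() >= 5),
    ("above_threshold", 4, lambda t: t["amount"] >= 50_000),
]
FLAG_AT = 3  # assumed minimum score for a transaction to be flagged


def score(txn):
    """Return (risk score, names of the rules that fired) for one transaction."""
    fired = [(name, weight) for name, weight, test in RULES if test(txn)]
    return sum(w for _, w in fired), [n for n, _ in fired]


def recall(flagged_ids, known_exception_ids):
    """Share of known exceptions that the scoring flagged."""
    known = set(known_exception_ids)
    return len(known & set(flagged_ids)) / len(known) if known else 0.0


txns = [
    {"id": "T1", "amount": 60_000, "posted": date(2024, 3, 9)},   # Saturday
    {"id": "T2", "amount": 1_250, "posted": date(2024, 3, 12)},   # Tuesday
    {"id": "T3", "amount": 5_000, "posted": date(2024, 3, 13)},   # Wednesday
]

results = {t["id"]: score(t) for t in txns}
flagged = [tid for tid, (total, _) in results.items() if total >= FLAG_AT]
hit_rate = recall(flagged, known_exception_ids=["T1", "T3"])

for tid, (total, reasons) in results.items():
    print(tid, total, reasons)
print("flagged:", flagged, "recall on known exceptions:", hit_rate)
```

Here the reasons list is what would go into a workpaper, and the recall figure shows that one known exception (T3) was missed at this threshold, which is the kind of finding a pre-rollout validation is meant to surface.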

4. Check Workflow and Integration Fit

- Key point: The platform should slot into how your team already works, not force a complete redesign.

- Evidence and workpapers: Does it support evidence collection, linking evidence to tests or controls, and exporting or syncing to your workpaper tool?

- Existing tools: Can it integrate with your current audit management, GRC, or ERP tools (e.g., single sign-on, API, or export/import)?

- User experience: Is the interface intuitive for auditors (not only data scientists)? Can reviewers and partners use it without extensive training?

- Deployment: Is it cloud-only, on-premise, or hybrid? Does that match your security and compliance requirements?

5. Evaluate Vendor Support, Roadmap, and Total Cost

- Key point: Technology choices are long-term; vendor stability and direction matter.

- Support and onboarding: What do implementation and onboarding look like? Are dedicated support, training, and documentation available? Are there user communities or success stories from firms like yours?

- Roadmap: What is on the vendor roadmap (e.g., new connectors, analytics, or reporting)? Does it align with where you want to be in two to three years?

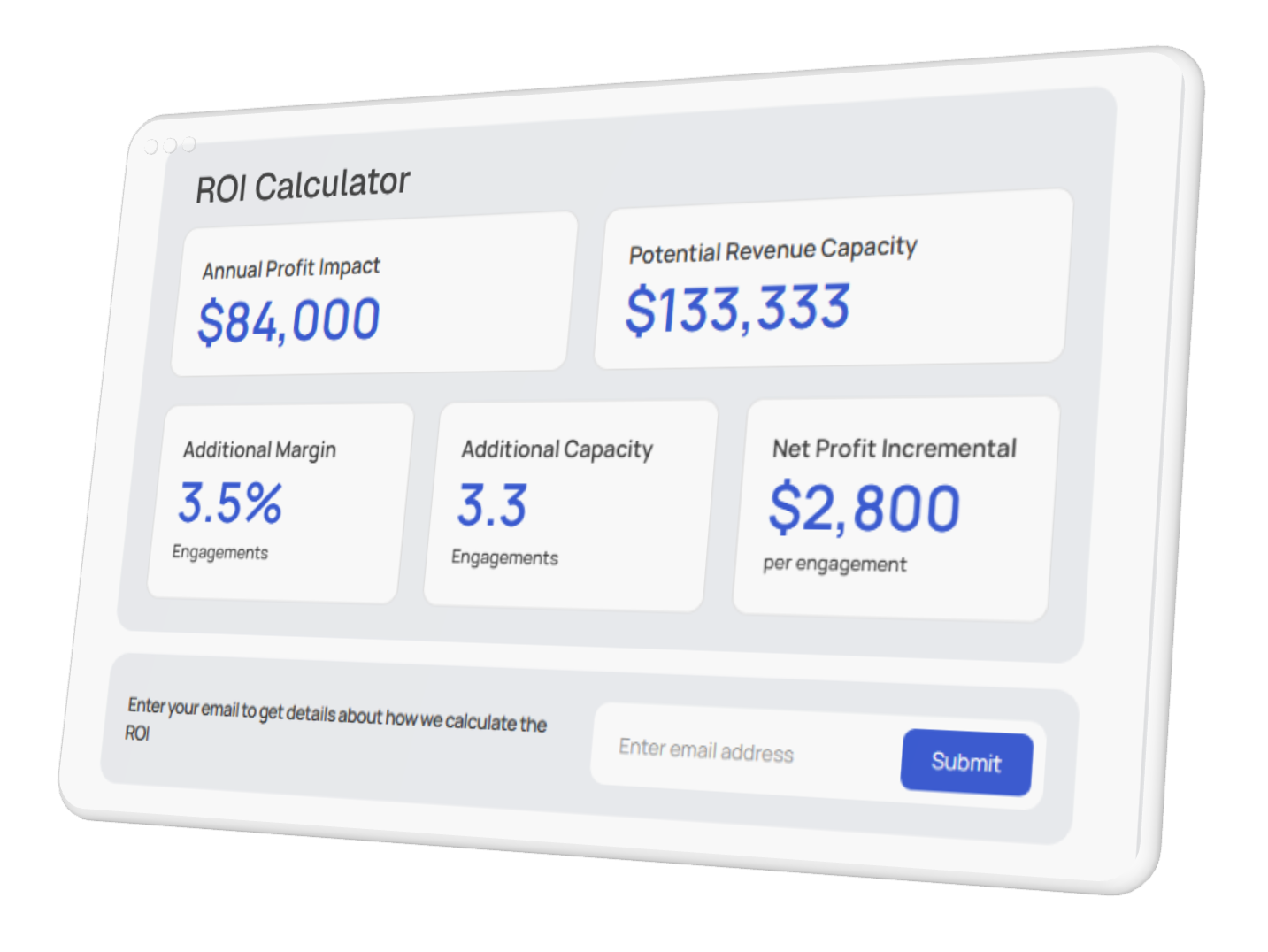

- Total cost: Consider not only license fees but also implementation, integration, training, and ongoing support. Compare pricing models (per user, per engagement, per volume) against your expected usage.

- References: Talk to one or two existing customers with a similar profile (size, audit type) to validate performance, support, and fit.
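
Comparing pricing models against expected usage is easiest with a small calculation. The sketch below is a rough illustration under assumed prices, usage figures, and a three-year horizon; the vendor names and every number are hypothetical.

```python
def total_cost(pricing, usage, years, one_off):
    """One-off costs (implementation, integration, training) plus recurring fees."""
    annual = (
        pricing.get("per_user", 0) * usage["users"]
        + pricing.get("per_engagement", 0) * usage["engagements"]
        + pricing.get("per_million_rows", 0) * usage["million_rows"]
        + pricing.get("annual_support", 0)
    )
    return one_off + annual * years


# Hypothetical expected usage per year.
usage = {"users": 40, "engagements": 120, "million_rows": 72}

# Hypothetical vendors: (recurring pricing, one-off costs).
vendors = {
    "Vendor A (per user)": ({"per_user": 1_500, "annual_support": 10_000}, 50_000),
    "Vendor B (per engagement)": ({"per_engagement": 600}, 30_000),
}

costs = {
    name: total_cost(pricing, usage, years=3, one_off=one_off)
    for name, (pricing, one_off) in vendors.items()
}
for name, cost in costs.items():
    print(f"{name}: {cost:,} over 3 years")
```

Re-running the comparison with higher and lower usage assumptions shows where each pricing model becomes more or less attractive.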

Shortlist and Pilot

Use the criteria above to shortlist two or three platforms. Then run a pilot on one engagement or one risk area: load real data, run risk scoring or evidence collection, and produce a sample output. Validate accuracy, explainability, and usability with the team. That hands-on experience will confirm whether the platform is the right fit before a firm-wide commitment.
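
One way to turn the five criteria into a shortlist is a weighted scoring matrix. The sketch below assumes ratings on a 1 to 5 scale; the weights, platform names, and ratings are placeholders to replace with your own priorities and evaluation notes.

```python
# Hypothetical weights for the five evaluation areas; they should sum to 1.
WEIGHTS = {
    "use_case_fit": 0.30,
    "data_connectivity": 0.20,
    "analytics_logic": 0.20,
    "workflow_fit": 0.15,
    "vendor_and_cost": 0.15,
}


def weighted_score(ratings):
    """Weighted average of 1-5 ratings across the evaluation areas."""
    return sum(WEIGHTS[area] * ratings[area] for area in WEIGHTS)


# Hypothetical ratings gathered from demos and reference calls.
ratings = {
    "Platform X": {"use_case_fit": 5, "data_connectivity": 3, "analytics_logic": 4,
                   "workflow_fit": 4, "vendor_and_cost": 3},
    "Platform Y": {"use_case_fit": 3, "data_connectivity": 5, "analytics_logic": 3,
                   "workflow_fit": 4, "vendor_and_cost": 4},
    "Platform Z": {"use_case_fit": 2, "data_connectivity": 3, "analytics_logic": 3,
                   "workflow_fit": 3, "vendor_and_cost": 5},
}

scores = {name: weighted_score(r) for name, r in ratings.items()}
shortlist = sorted(scores, key=scores.get, reverse=True)[:2]

for name in sorted(scores, key=scores.get, reverse=True):
    print(f"{name}: {scores[name]:.2f}")
print("Shortlist for pilot:", shortlist)
```

The scores are a way to structure the discussion, not a substitute for the pilot; a platform that ranks first on paper can still fail on real data.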