TL;DR

The most effective way to measure and explain audit risk with AI is to move from qualitative judgment to quantitative, data-driven assessment that analyzes 100% of transactions and assigns each a dynamic risk score. Real-time analytics and continuous monitoring keep those scores current. "Glass box" (explainable) AI frameworks and visualization tools then translate complex algorithmic logic into business language that stakeholders and regulators can understand, an expectation reinforced by the transparency and traceability provisions of the EU AI Act and the NIST AI Risk Management Framework (AI RMF). Platforms like Finspectors demonstrate this capability.

The Shift to Quantitative Risk Assessment

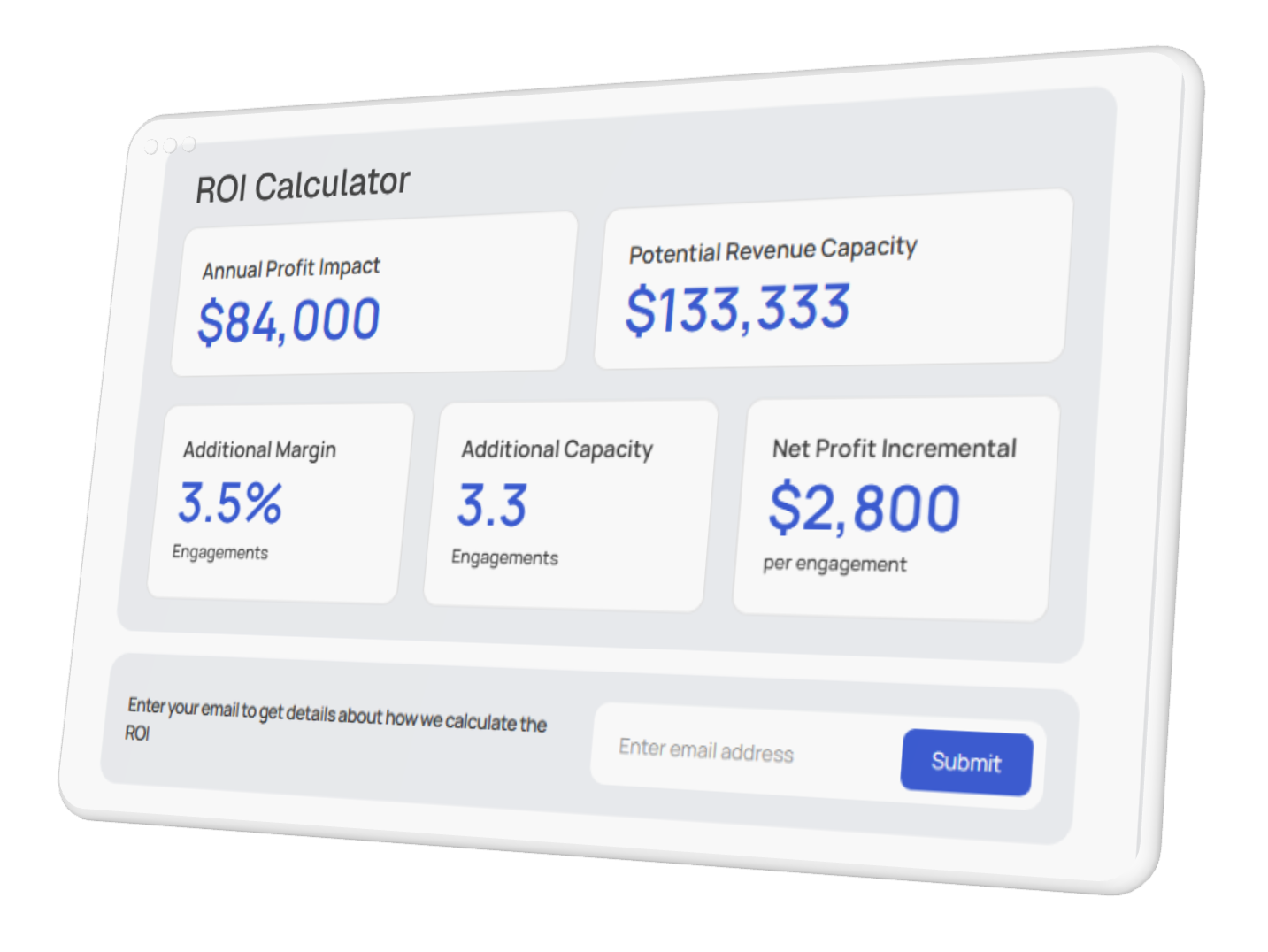

The most effective way to measure audit risk today involves a fundamental transition from qualitative judgment to quantitative, data-driven assessment. Historically, auditors relied on sampling methods and subjective "high, medium, low" categorizations to gauge risk. However, modern AI technology allows for the analysis of 100% of transactions, enabling firms to assign specific, dynamic risk scores to every entry in a ledger. This shift ensures that risk measurement is based on empirical evidence rather than intuition. Solutions like Finspectors leverage this capability to streamline audit processes.

According to a 2024 KPMG global survey, 60% of organizations now use AI for trend, impact, and risk predictions in financial reporting. This adoption allows auditors to move beyond static checklists. By using machine learning algorithms, audit teams can identify subtle patterns and correlations that human analysis might miss, producing a more precise assessment of the risk of material misstatement. Risk scoring engines with tiered profiles illustrate how these quantitative models sort transactions into risk levels with granular precision.
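Tiered risk profiles can be sketched in a few lines of Python. This is a minimal illustration, not the design of any particular risk scoring engine: it assumes every ledger entry already carries a 0 to 100 risk score, and the tier names and cut-offs (`TIER_CUTOFFS`, `TIER_NAMES`) are hypothetical values that a real engagement would calibrate to materiality and risk appetite.

```python
from bisect import bisect_right
from typing import Dict

# Hypothetical tier cut-offs on a 0-100 score: each value is the inclusive
# lower bound of the next tier (Medium, High, Critical).
TIER_CUTOFFS = [40, 70, 90]
TIER_NAMES = ["Low", "Medium", "High", "Critical"]


def assign_tier(score: float) -> str:
    """Map one continuous risk score to a named tier."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    return TIER_NAMES[bisect_right(TIER_CUTOFFS, score)]


def tier_population(scores: Dict[str, float]) -> Dict[str, str]:
    """Tier every ledger entry (the full population, not a sample)."""
    return {entry_id: assign_tier(score) for entry_id, score in scores.items()}


if __name__ == "__main__":
    ledger_scores = {"JE-1001": 12.5, "JE-1002": 71.0, "JE-1003": 95.2, "JE-1004": 40.0}
    for entry_id, tier in tier_population(ledger_scores).items():
        print(entry_id, tier)
```

Using `bisect_right` makes each cut-off the inclusive lower bound of its tier, so a score of exactly 40 lands in Medium rather than Low.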

The market reflects this urgent demand for better measurement tools. Research indicates that the AI-in-audit market is projected to grow at a CAGR of 27.9% from 2024 to 2033. This growth is driven by the necessity to handle increasing data volumes and the complexity of modern financial systems. Quantitative assessment provides a defensible, mathematical basis for audit opinions, which is crucial when explaining risk methodologies to stakeholders and regulators. Platforms like Finspectors exemplify this transformation.

Key Benefits of Quantitative AI Assessment

- Full Population Testing: Unlike manual sampling, AI analyzes entire datasets, ensuring no outlier or anomaly is overlooked due to sample selection bias.

- Dynamic Risk Scoring: Risk scores are updated in real time as new data enters the system, rather than being a static snapshot taken once a year.

- Reduction of False Positives: Advanced algorithms learn from historical data to distinguish between genuine risks and benign anomalies, streamlining the review process.

- Pattern Recognition: AI identifies complex, non-linear relationships between variables that suggest fraud or error, which traditional linear analysis often misses.

- Objective Documentation: Every risk flag creates a digital audit trail, providing clear evidence for why a specific area was scrutinized.
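Dynamic risk scoring can be illustrated with a baseline that updates as each transaction arrives. The sketch below is a simplified stand-in for a production model: it assumes the only signal is transaction amount and scores each new amount by its distance from the running mean in standard deviations (a z-score), using Welford's online algorithm so the baseline never has to be recomputed from scratch. `RunningBaseline`, `score_stream`, and the `warmup` parameter are hypothetical names.

```python
import math
from typing import Iterable, List


class RunningBaseline:
    """Running mean and sample standard deviation (Welford's online algorithm)."""

    def __init__(self) -> None:
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def std(self) -> float:
        return math.sqrt(self.m2 / (self.n - 1)) if self.n > 1 else 0.0

    def z_score(self, x: float) -> float:
        """Distance from the current mean in standard deviations."""
        s = self.std
        return abs(x - self.mean) / s if s > 0 else 0.0


def score_stream(amounts: Iterable[float], warmup: int = 5) -> List[float]:
    """Score each amount against the baseline as it stood before that amount arrived."""
    baseline = RunningBaseline()
    scores = []
    for amount in amounts:
        # No score until the baseline has seen `warmup` transactions.
        scores.append(baseline.z_score(amount) if baseline.n >= warmup else 0.0)
        baseline.update(amount)  # the baseline moves with every new transaction
    return scores


if __name__ == "__main__":
    print(score_stream([100, 102, 98, 101, 99, 100, 500]))
```

Scoring before updating matters: if the outlier were absorbed into the baseline first, it would partly mask itself.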

Real-Time Analytics and Continuous Monitoring

Measuring audit risk effectively requires speed. Traditional audits often look at data that is months old, making the "measurement" of risk a historical exercise rather than a proactive one. AI technology facilitates continuous auditing, where data is ingested and analyzed in real time. This capability transforms risk measurement from a periodic check-up into a constant health monitor. By detecting issues as they occur, auditors can measure the velocity and impact of risks immediately.

A report by KPMG highlights that 56% of firms leverage AI for real-time risk, fraud, and weakness insights. This capability is essential for modern governance, as it allows organizations to pivot quickly in response to emerging threats. For example, if a procurement system suddenly shows a spike in unauthorized vendor additions, AI tools can flag this instantly, measuring the potential financial exposure before it compounds.
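The vendor-addition example can be expressed as a simple spike rule: compare each day's count of new vendors with the trailing average of the previous days. This is a minimal sketch, assuming daily counts have already been extracted from the procurement system; the window length, the multiplier `k`, and the standard-deviation floor `min_sd` are hypothetical tuning parameters, not values from any specific tool.

```python
from collections import deque
from statistics import mean, pstdev
from typing import List, Tuple


def flag_spikes(daily_counts: List[int], window: int = 7, k: float = 3.0,
                min_sd: float = 1.0) -> List[Tuple[int, int, float]]:
    """Flag days whose count exceeds the trailing mean by more than k standard deviations.

    Returns (day_index, count, trailing_mean) for each flagged day.
    """
    history = deque(maxlen=window)  # only the previous `window` days
    flagged = []
    for day, count in enumerate(daily_counts):
        if len(history) == window:
            mu = mean(history)
            # A floor on the deviation stops near-constant histories from
            # turning tiny fluctuations into alerts.
            sd = max(pstdev(history), min_sd)
            if count > mu + k * sd:
                flagged.append((day, count, round(mu, 2)))
        history.append(count)
    return flagged


if __name__ == "__main__":
    new_vendors_per_day = [2, 3, 2, 1, 2, 3, 2, 2, 15, 2]
    print(flag_spikes(new_vendors_per_day))
```

Estimating the financial exposure would be a second step, for example summing payments already released to the vendors added on a flagged day.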

To implement real-time measurement effectively, organizations must integrate their ERP systems directly with AI auditing platforms. This integration allows data to flow seamlessly and enables continuous control monitoring (CCM). Anomaly detection is central to these real-time systems: it is the mechanism that catches irregularities the moment they happen.

Steps to Implement Continuous Risk Measurement

a) Data Integration: Connect AI auditing tools directly to financial systems (ERP, CRM, Payroll) via APIs to ensure a steady stream of live data.

b) Define Risk Parameters: Establish baseline "normal" behavior for all transaction types using historical data to train the machine learning models.

c) Configure Alert Thresholds: Set specific thresholds for risk scores that trigger immediate notifications to the audit team or management.

d) Automate Validation: Use robotic process automation (RPA) to perform initial validation checks on flagged items, reducing the manual burden on human auditors.

e) Continuous Refinement: Regularly review the AI's output and provide feedback to the model (human-in-the-loop) to improve accuracy and reduce noise over time.
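Steps b) through d) can be sketched end to end in Python. The historical amounts, the per-type thresholds, and the `auto_validate` rule (a stand-in for an RPA check that confirms someone other than the poster approved the item) are all hypothetical. A real deployment would train richer models on the integrated ERP data from step a) and tune thresholds through the feedback loop in step e).

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple

# Step b (hypothetical data): historical amounts that define "normal" per type.
HISTORY = {
    "payroll": [5000, 5100, 4950, 5050, 5000],
    "vendor_payment": [1200, 800, 1500, 950, 1100],
}
# Step c (hypothetical values): z-score thresholds that trigger an alert.
THRESHOLDS = {"payroll": 3.0, "vendor_payment": 4.0}


def build_baselines(history: Dict[str, List[float]]) -> Dict[str, Tuple[float, float]]:
    """Mean and sample standard deviation per transaction type."""
    return {t: (mean(v), stdev(v)) for t, v in history.items()}


def needs_alert(txn: dict, baselines, thresholds) -> Tuple[bool, float]:
    mu, sd = baselines[txn["type"]]
    if sd == 0:
        z = 0.0 if txn["amount"] == mu else float("inf")
    else:
        z = abs(txn["amount"] - mu) / sd
    return z >= thresholds[txn["type"]], z


def auto_validate(txn: dict) -> bool:
    """Step d stand-in for an RPA check: approved by someone other than the poster."""
    return txn.get("approved_by") not in (None, txn.get("posted_by"))


def triage(txns: List[dict], baselines, thresholds) -> List[Tuple[str, float, str]]:
    """Alert on outliers; route items that also fail validation to escalation."""
    queue = []
    for txn in txns:
        alert, z = needs_alert(txn, baselines, thresholds)
        if alert:
            route = "review" if auto_validate(txn) else "escalate"
            queue.append((txn["id"], round(z, 1), route))
    return queue


if __name__ == "__main__":
    txns = [
        {"id": "T1", "type": "payroll", "amount": 9000, "posted_by": "clerk1", "approved_by": "mgr2"},
        {"id": "T2", "type": "vendor_payment", "amount": 1300, "posted_by": "clerk1", "approved_by": "mgr2"},
        {"id": "T3", "type": "vendor_payment", "amount": 5000, "posted_by": "clerk2", "approved_by": None},
    ]
    print(triage(txns, build_baselines(HISTORY), THRESHOLDS))
```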

Enhancing Explainability and Transparency

Measuring risk is only half the battle; explaining it to the audit committee, board of directors, and regulators is equally critical. One of the primary challenges with AI technology is the "black box" problem, where the rationale behind an AI decision is opaque. The most effective way to explain audit risk with AI is to utilize "glass box" or explainable AI (XAI) frameworks. These tools provide the "why" behind a risk score, translating complex algorithmic logic into understandable business language.

Regulatory pressure is driving this need for transparency. The EU AI Act requires documented, auditable risk controls for high-risk AI systems, including logging and traceability, and the NIST AI Risk Management Framework (AI RMF) sets out comparable voluntary practices. When an auditor presents a high-risk finding to a client, they must be able to point to the specific factors, such as timing, user behavior, or transaction size, that contributed to that assessment. Explainability builds trust and allows management to take targeted corrective action.
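For a transparent linear scoring model, the "why" behind a score can be computed directly: each factor's contribution is its weight multiplied by how far the transaction sits from the population baseline for that factor, and the largest positive contributions become plain-language reasons. This is a minimal sketch with hypothetical weights, baselines, and phrasing; for nonlinear models, attribution methods such as SHAP estimate comparable per-feature contributions.

```python
from typing import Dict, List, Tuple

# Hypothetical transparent (linear) model: weights and the population baseline
# value of each feature.
WEIGHTS = {"after_hours": 1.5, "new_user": 1.0, "amount_vs_median": 0.8, "round_amount": 0.4}
BASELINE = {"after_hours": 0.05, "new_user": 0.10, "amount_vs_median": 1.0, "round_amount": 0.20}

# Business-language phrasing for each feature (hypothetical wording).
PHRASES = {
    "after_hours": "posted outside business hours",
    "new_user": "entered by a recently created user account",
    "amount_vs_median": "amount is large relative to similar transactions",
    "round_amount": "amount is a suspiciously round number",
}


def explain(features: Dict[str, float], top_n: int = 2) -> Tuple[float, List[str]]:
    """Return the score relative to baseline and the top reasons behind it."""
    contributions = {f: WEIGHTS[f] * (features[f] - BASELINE[f]) for f in WEIGHTS}
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    # Only factors that pushed the score up are offered as reasons.
    reasons = [f"{PHRASES[f]} (+{c:.2f})" for f, c in ranked[:top_n] if c > 0]
    return sum(contributions.values()), reasons


if __name__ == "__main__":
    txn = {"after_hours": 1, "new_user": 0, "amount_vs_median": 4.0, "round_amount": 1}
    total, reasons = explain(txn)
    print(f"Risk above baseline: {total:.2f}")
    for reason in reasons:
        print(" -", reason)
```

For a linear model the contributions sum exactly to the difference between this transaction's score and the baseline score, which is what makes the explanation auditable.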

Visualization plays a key role in explanation. Instead of presenting raw data tables, AI tools generate dynamic dashboards that visualize risk heat maps, trend lines, and cluster analyses. This visual evidence makes it significantly easier to communicate complex risk landscapes to non-technical stakeholders. By bridging the gap between data science and business strategy, auditors can turn risk measurement into strategic value.
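Behind a risk heat map there is usually a simple aggregation: group scored flags along two dimensions and average them. The sketch below assumes each flag is an `(entity, month, score)` tuple, a hypothetical shape, and builds the grid a dashboard would color; rendering is left to whatever charting tool the team already uses.

```python
from collections import defaultdict
from typing import Iterable, List, Optional, Tuple

Flag = Tuple[str, str, float]  # (entity, month, risk score), hypothetical shape


def risk_heatmap(flags: Iterable[Flag]) -> Tuple[List[str], List[str], List[List[Optional[float]]]]:
    """Average risk scores into an entity x month grid; None marks empty cells."""
    sums, counts = defaultdict(float), defaultdict(int)
    for entity, month, score in flags:
        sums[(entity, month)] += score
        counts[(entity, month)] += 1
    entities = sorted({e for e, _ in counts})
    months = sorted({m for _, m in counts})
    grid = [
        [round(sums[(e, m)] / counts[(e, m)], 1) if (e, m) in counts else None for m in months]
        for e in entities
    ]
    return entities, months, grid


if __name__ == "__main__":
    flags = [("EU", "2024-01", 80.0), ("EU", "2024-01", 60.0), ("US", "2024-02", 30.0)]
    entities, months, grid = risk_heatmap(flags)
    print("entity", *months)
    for entity, row in zip(entities, grid):
        print(entity, *row)
```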

Core Strategies for AI Risk Measurement

To measure audit risk effectively, firms must adopt specific strategies that leverage the full capabilities of AI technology. One core strategy is multidimensional risk scoring. Rather than looking at a transaction in isolation, AI evaluates it across multiple dimensions: who posted it, when it was posted, the approval chain, and how it compares to similar historical transactions. This holistic view provides a more accurate measurement of inherent risk.
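A multidimensional score can be sketched as a weighted sum of per-dimension scores, one for each dimension named above. Everything here is a hypothetical illustration: the `JournalEntry` fields, the 08:00 to 18:00 business-hours window, the "fewer than 5 prior postings" rule for an unfamiliar user, and the weights would all be set by the audit team and validated against history.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Dict, List, Optional

# Hypothetical dimension weights (they sum to 1.0).
WEIGHTS = {"who": 0.20, "when": 0.25, "approval": 0.35, "amount": 0.20}


@dataclass
class JournalEntry:
    entry_id: str
    posted_by: str
    approved_by: Optional[str]
    posted_at: datetime
    amount: float


def dimension_scores(je: JournalEntry, user_post_counts: Dict[str, int],
                     similar_amounts: List[float]) -> Dict[str, float]:
    """Score one entry on each dimension, each in [0, 1]."""
    med = median(similar_amounts)
    ratio = je.amount / med if med > 0 else float("inf")
    return {
        # Who: users with little posting history are treated as higher risk.
        "who": 1.0 if user_post_counts.get(je.posted_by, 0) < 5 else 0.0,
        # When: weekends, or outside 08:00-18:00 on weekdays.
        "when": 1.0 if je.posted_at.weekday() >= 5 or not 8 <= je.posted_at.hour < 18 else 0.0,
        # Approval chain: missing or self-approved.
        "approval": 1.0 if je.approved_by in (None, je.posted_by) else 0.0,
        # Comparison to history: 0 at or below the median, capped at 1 from 3x the median.
        "amount": min(max(ratio - 1.0, 0.0) / 2.0, 1.0),
    }


def risk_score(je: JournalEntry, user_post_counts: Dict[str, int],
               similar_amounts: List[float]) -> float:
    dims = dimension_scores(je, user_post_counts, similar_amounts)
    return sum(WEIGHTS[d] * s for d, s in dims.items())


if __name__ == "__main__":
    je = JournalEntry("JE-1", "u7", "u7", datetime(2024, 6, 15, 22, 0), 9000.0)
    print(dimension_scores(je, {"u7": 2}, [1000.0, 1200.0, 900.0]))
    print(risk_score(je, {"u7": 2}, [1000.0, 1200.0, 900.0]))
```

Keeping the per-dimension scores, not just the total, is what later lets the auditor explain which dimension drove a flag.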

Another vital strategy is the integration of Generative AI for qualitative analysis. While traditional AI excels at numbers, Generative AI can analyze unstructured data such as contracts, board minutes, and email correspondence to identify risks related to compliance or sentiment. By processing vast amounts of text, it can surface hidden liabilities or control weaknesses that structured data analysis might miss, strengthening the firm's overall audit risk intelligence.

Leading firms are also using "champion/challenger" models. In this approach, multiple algorithms run simultaneously to assess the same dataset, and the results are compared to see which model most accurately predicts known risks. This competitive modeling keeps the measurement tools sharp and effective. As noted by MindBridge, companies like Align Technology have used these platforms to analyze billions of transactions, uncovering discrepancies missed by manual reviews.
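A champion/challenger comparison can be sketched as running several scoring functions over the same labeled history and keeping the one that ranks known risks highest. The two models, the records, and the choice of recall within the top k flags as the yardstick are hypothetical; a real comparison would score a held-out period and weigh several metrics, including the cost of false positives.

```python
from typing import Callable, Dict, List, Tuple


def recall_at_k(scores: List[float], labels: List[int], k: int) -> float:
    """Share of known risky items (label 1) that appear in the model's top-k flags."""
    top_k = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    positives = sum(labels)
    return sum(labels[i] for i in top_k) / positives if positives else 0.0


def pick_champion(models: Dict[str, Callable[[dict], float]], records: List[dict],
                  labels: List[int], k: int) -> Tuple[str, Dict[str, float]]:
    """Score the same records with every model and return the best performer."""
    results = {name: recall_at_k([fn(r) for r in records], labels, k)
               for name, fn in models.items()}
    return max(results, key=results.get), results


# Hypothetical models: a size-only champion and a challenger that adds timing.
MODELS = {
    "amount_only": lambda r: r["amount"],
    "amount_and_timing": lambda r: r["amount"] / 1000 + 5 * r["after_hours"],
}

if __name__ == "__main__":
    history = [
        {"amount": 500, "after_hours": 1}, {"amount": 9000, "after_hours": 0},
        {"amount": 8000, "after_hours": 0}, {"amount": 7000, "after_hours": 1},
        {"amount": 100, "after_hours": 0},
    ]
    known_risks = [1, 0, 0, 1, 0]  # 1 = confirmed issue in past audits (hypothetical)
    print(pick_champion(MODELS, history, known_risks, k=2))
```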

Effective Risk Measurement Methodologies

i. Unsupervised Learning for Outliers: Algorithms cluster transactions based on similarity and flag those that fall far outside established clusters as potential risks.

ii. Supervised Learning for Fraud: Models trained on known fraud cases identify new transactions that share characteristics with confirmed fraudulent activities.

iii. Natural Language Processing (NLP): AI scans general ledger descriptions and contract terms to identify risky keywords or unusual phrasing.

iv. Network Analysis: Visualizing relationships between entities (vendors, employees, customers) to detect conflicts of interest or circular trading schemes.

v. Predictive Modeling: Using historical trends to forecast future risk areas, allowing auditors to allocate resources proactively.
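The network analysis method can be sketched as cycle detection on a directed graph of flows between entities, since goods or money that travel A to B to C and back to A are a classic signature of circular trading. This minimal sketch assumes the flows have already been reduced to `(source, destination)` pairs; `find_cycles` and its `max_len` cap are hypothetical, and a production system would more likely use a graph library (for example, `networkx.simple_cycles`) and weight edges by amount.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def find_cycles(edges: Iterable[Tuple[str, str]], max_len: int = 4) -> List[List[str]]:
    """Return simple directed cycles of up to max_len entities, each reported once.

    Each cycle is listed starting from its alphabetically smallest entity,
    which is how duplicates (A->B->C versus B->C->A) are avoided.
    """
    graph: Dict[str, Set[str]] = defaultdict(set)
    for src, dst in edges:
        graph[src].add(dst)
    cycles: List[List[str]] = []

    def dfs(start: str, node: str, path: List[str]) -> None:
        for nxt in sorted(graph[node]):
            if nxt == start and len(path) > 1:
                cycles.append(path[:])  # closed a loop back to the start
            elif nxt > start and nxt not in path and len(path) < max_len:
                path.append(nxt)
                dfs(start, nxt, path)
                path.pop()

    for start in sorted(graph):
        dfs(start, start, [start])
    return cycles


if __name__ == "__main__":
    flows = [("VendorA", "VendorB"), ("VendorB", "VendorC"),
             ("VendorC", "VendorA"), ("VendorC", "Customer1")]
    print(find_cycles(flows))
```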

Integrating AI with Governance Frameworks

Measuring and explaining risk is not an isolated technical task; it must be integrated into the broader corporate governance framework. Boards and audit committees are increasingly demanding visibility into how AI is being used to monitor the organization. Effective explanation involves demonstrating how AI tools align with internal controls and external regulations. This alignment ensures that the "black box" of AI does not become a liability itself.

Recent data from Harvard Law School reveals that 48% of companies now specifically cite AI risk as part of board-level risk oversight, a significant increase from previous years. Furthermore, 36% of companies disclose AI as a separate risk factor in their 10-K filings. This trend underscores the necessity for auditors to provide clear, jargon-free explanations of AI findings that can be understood by board members who may not have technical backgrounds.

To achieve this, auditors should map AI risk findings directly to the COSO framework or other relevant control standards. By showing how a specific AI-detected anomaly relates to a control objective (e.g., completeness, accuracy, validity), the explanation becomes relevant to the governance structure. This context is essential for explaining the significance of the measured risk.

Governance Integration Checklist

- Policy Alignment: Ensure AI risk parameters match the organization's risk appetite statement and internal control policies.

- Oversight Reporting: Create standardized reports for the audit committee that summarize AI-driven insights, focusing on high-risk areas and systemic trends.

- Model Validation: Regularly audit the AI models themselves to ensure they are functioning as intended and not introducing bias (Model Risk Management).

- Human-in-the-Loop: Mandate human review for all high-risk flags to validate the AI's measurement before it is reported to governance bodies.

- Regulatory Mapping: Explicitly link AI findings to specific regulatory requirements (SOX, GDPR, etc.) to demonstrate compliance implications.
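The Human-in-the-Loop and Regulatory Mapping items can be combined in a small reporting gate. This is a sketch under stated assumptions: `CONTROL_MAP` is a hypothetical lookup from finding types to control objectives and regulations (a real one would come from the entity's control matrix), and `HIGH_RISK` is a hypothetical cut-off that should be derived from the organization's risk appetite statement.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

# Hypothetical map from AI finding types to (control objective, regulations).
CONTROL_MAP: Dict[str, Tuple[str, List[str]]] = {
    "duplicate_payment": ("accuracy", ["SOX"]),
    "unapproved_vendor": ("validity", ["SOX"]),
    "unrecorded_revenue": ("completeness", ["SOX"]),
}
HIGH_RISK = 0.8  # hypothetical cut-off; should reflect the risk appetite statement


@dataclass
class Finding:
    finding_id: str
    finding_type: str
    risk_score: float                  # 0-1 score from the AI model
    reviewed_by: Optional[str] = None  # human reviewer who validated the flag


def committee_report(findings: List[Finding]) -> Tuple[List[dict], List[str]]:
    """Split findings into reportable rows and high-risk items still awaiting human review."""
    rows, pending = [], []
    for f in findings:
        if f.risk_score >= HIGH_RISK and f.reviewed_by is None:
            pending.append(f.finding_id)  # human-in-the-loop gate
            continue
        objective, regs = CONTROL_MAP.get(f.finding_type, ("unmapped", []))
        rows.append({"id": f.finding_id, "objective": objective,
                     "regulations": regs, "score": f.risk_score})
    return rows, pending


if __name__ == "__main__":
    rows, pending = committee_report([
        Finding("F1", "duplicate_payment", 0.9, reviewed_by="auditor_a"),
        Finding("F2", "unapproved_vendor", 0.95),
    ])
    print(rows)
    print("awaiting review:", pending)
```

Reporting unmapped findings rather than dropping them keeps gaps in the control matrix visible to the committee.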

Practical Applications and Case Studies

The theory of measuring and explaining risk with AI is best understood through practical application. In the field, these tools are transforming how audits are conducted. For instance, instead of spending weeks vouching invoices, auditors now use AI to instantly verify three-way matches across thousands of purchase orders. The "measurement" of risk shifts from "did we find an error in the sample?" to "what is the error rate across the entire population?"
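The shift from sample results to a population error rate is easy to see in a three-way match. The sketch below checks every purchase order against its goods receipt and invoice and reports the exception rate across the whole population. The `Doc` record, the 1% price tolerance, and the sample data are hypothetical, and real matching would also handle partial deliveries and multiple invoices per order.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Doc:
    """Hypothetical simplified document; unit_price is ignored for receipts."""
    po_id: str
    quantity: int
    unit_price: float


def three_way_match(pos: List[Doc], receipts: List[Doc], invoices: List[Doc],
                    price_tol: float = 0.01) -> Tuple[List[Tuple[str, str]], float]:
    """Return (exceptions, error rate) across every purchase order, not a sample."""
    rec = {r.po_id: r for r in receipts}
    inv = {i.po_id: i for i in invoices}
    exceptions = []
    for po in pos:
        r, i = rec.get(po.po_id), inv.get(po.po_id)
        if r is None or i is None:
            exceptions.append((po.po_id, "missing receipt or invoice"))
        elif not (po.quantity == r.quantity == i.quantity):
            exceptions.append((po.po_id, "quantity mismatch"))
        elif abs(i.unit_price - po.unit_price) > price_tol * po.unit_price:
            exceptions.append((po.po_id, "price variance"))
    rate = len(exceptions) / len(pos) if pos else 0.0
    return exceptions, rate


if __name__ == "__main__":
    pos = [Doc("PO1", 10, 5.0), Doc("PO2", 4, 20.0), Doc("PO3", 1, 100.0), Doc("PO4", 2, 50.0)]
    receipts = [Doc("PO1", 10, 5.0), Doc("PO2", 3, 20.0), Doc("PO3", 1, 100.0), Doc("PO4", 2, 50.0)]
    invoices = [Doc("PO1", 10, 5.0), Doc("PO2", 4, 20.0), Doc("PO3", 1, 110.0)]
    exceptions, rate = three_way_match(pos, receipts, invoices)
    print(exceptions, f"error rate: {rate:.0%}")
```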

A compelling example comes from Mercadien, an accounting and advisory firm. By adopting AI-powered risk identification, they reported that the process became significantly more accurate and efficient. Stephen Noon, Managing Director, noted that the technology provided a better opportunity to "think through the risk areas, document them, and have a link back to our audit programs." This illustrates the power of AI to not just find risk, but to explain its context within the audit plan.

Similarly, Dawgen Global applied an AI Audit Methodology to assess credit decisioning and fraud analytics. The result was improved fairness metrics and enhanced explainability for customer-facing decisions. By measuring risk through an AI lens, they were able to achieve regulatory readiness certification, proving that the measurement was robust enough to satisfy external authorities.

To gain a deeper understanding of audit risk in today's evolving landscape, professionals must look at these real-world implementations. They demonstrate that effective measurement requires a combination of advanced technology and a methodology that prioritizes clarity and context.

Future Trends in AI Audit Technology

As we look toward the future, the way we measure and explain audit risk will continue to evolve with advancements in AI technology. One emerging trend is the move toward "predictive auditing." Rather than just assessing current risk, AI models will forecast future control failures based on operational data patterns. This shifts the explanation from "what went wrong" to "what is likely to go wrong," allowing for preventative risk management.

Another significant trend is the democratization of AI tools. As noted in PwC's 2025 AI Business Predictions, we can expect agentic AI (systems that can autonomously execute complex tasks) to play a larger role. In an audit context, this could mean AI agents that autonomously navigate a client's system to gather evidence, measure risk, and draft the initial explanation for the auditor's review.

Finally, the integration of blockchain with AI will enhance the immutability of the audit trail. As AI measures risk across blockchain ledgers, the explanation of that risk will be supported by cryptographic proof, giving stronger assurance that the underlying records have not been altered. Auditors must stay ahead of these trends to ensure their risk measurement methodologies remain effective and relevant.
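The tamper-evidence idea behind a blockchain-backed audit trail can be illustrated with a plain hash chain: each logged risk flag's SHA-256 hash also covers the previous entry's hash, so editing any earlier record breaks verification. This is a simplified sketch, not a blockchain (there is no distributed consensus), and the record fields are hypothetical.

```python
import hashlib
import json
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # sort_keys makes the serialization, and therefore the hash, deterministic.
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(chain: List[dict], record: dict) -> List[dict]:
    """Append a risk flag whose hash covers the previous entry's hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev_hash": prev_hash, "hash": _digest(prev_hash, record)})
    return chain


def verify(chain: List[dict]) -> bool:
    """Recompute every hash; any edited record or broken link fails verification."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    trail: List[dict] = []
    append_entry(trail, {"flag_id": "F1", "score": 0.92, "reason": "after-hours posting"})
    append_entry(trail, {"flag_id": "F2", "score": 0.40, "reason": "round amount"})
    print(verify(trail))
    trail[0]["record"]["score"] = 0.10  # simulate tampering with an earlier flag
    print(verify(trail))
```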

Emerging Technologies in Risk Measurement

a) Agentic AI: Autonomous software agents that perform end-to-end testing procedures and draft risk memos.

b) Multimodal Analysis: AI that combines video, audio, text, and numerical data to assess risk (e.g., analyzing inventory drone footage combined with ledger data).

c) Federated Learning: Allowing AI models to learn from data across different organizations without sharing the actual sensitive data, improving industry-wide risk benchmarks.

d) Continuous Assurance Platforms: Ecosystems where the auditor, client, and AI operate in a shared, always-on environment for instant risk transparency.

e) Automated ESG Auditing: Using AI to measure and explain risks related to environmental, social, and governance factors, which are becoming critical for reporting.

Conclusion

The most effective way to measure and explain audit risk with AI lies in the combination of quantitative precision and transparent communication. By moving away from manual sampling and embracing full-population analysis, auditors can measure risk with unprecedented accuracy. AI technology enables real-time monitoring, dynamic scoring, and the detection of complex patterns that traditional methods simply cannot see. However, measurement is only effective if it can be explained.

To bridge the gap between data science and decision-making, auditors must utilize explainable AI frameworks and advanced visualization tools. These technologies transform raw risk scores into a compelling narrative that stakeholders can understand and act upon. As regulatory scrutiny increases and business environments become more complex, the ability to leverage AI for both robust measurement and clear explanation will define the future of the audit profession. Organizations that adopt these strategies today will not only ensure compliance but also uncover strategic insights that drive business value.