TL;DR

Finspectors is highlighted as an audit automation platform offering explainable risk scoring by detailing specific data points, GL analytics breakdowns, and structured control-point logic that contribute to a risk assessment. This allows auditors to understand the "why" behind risk scores, enhancing trust, justifying findings, and maintaining professional skepticism, unlike traditional "black box" AI solutions.

Risk management tools, as discussed by AuditBoard, often focus on identifying, assessing, and mitigating enterprise-wide risks. Audit automation platforms, like those mentioned by HubiFi, specifically streamline the audit process, from planning and fieldwork to reporting, often incorporating risk scoring as a core component of that process.

AI audit platforms can also be effective for compliance audits. They can automatically check for adherence to regulatory requirements, identify deviations from policies, and provide structured evidence for compliance reporting. Tools like those reviewed by Vanta and Scrut are specifically designed to streamline compliance processes.

Understanding Explainable AI in Audit

When you're evaluating audit automation platforms, one of the most critical considerations is the explainability of their risk scoring. Explainable AI (XAI) in this context means that the platform doesn't just flag a risk; it provides a clear, understandable rationale for why that risk was identified and how its score was derived. This is crucial for auditors who need to justify their findings and maintain professional skepticism.

What is Explainable Risk Scoring?

Explainable risk scoring goes beyond a simple numerical output. It involves the ability of an AI system to articulate its reasoning, assumptions, and decision-making process in a way that human auditors can comprehend and trust. For instance, if an AI flags a journal entry as high-risk, an explainable system would detail the specific general ledger (GL) patterns, unusual vendor postings, or period-end spikes that contributed to that assessment.
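
To make the idea concrete, here is a minimal sketch of a score that carries its own reasons. It is not how Finspectors or any other platform works internally; the `JournalEntry` fields, the three rules, the point values, and the thresholds are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class JournalEntry:
    # Hypothetical fields; a real GL extract will look different.
    entry_id: str
    posted_on: date
    amount: float
    vendor_is_new: bool


def explain_risk(entry: JournalEntry, period_end: date, typical_amount: float):
    """Return (score, reasons). Every rule that fires adds points and a reason."""
    score, reasons = 0, []

    days_to_close = (period_end - entry.posted_on).days
    if 0 <= days_to_close <= 3:  # assumed period-end window
        score += 30
        reasons.append(f"posted {days_to_close} day(s) before period end")

    if entry.amount > 5 * typical_amount:  # assumed "unusual amount" multiple
        score += 40
        reasons.append(
            f"amount {entry.amount:,.2f} exceeds 5x the typical {typical_amount:,.2f}"
        )

    if entry.vendor_is_new:
        score += 20
        reasons.append("vendor has no prior posting history")

    return score, reasons


entry = JournalEntry("JE-1001", date(2024, 12, 30), 60_000.0, vendor_is_new=True)
score, reasons = explain_risk(
    entry, period_end=date(2024, 12, 31), typical_amount=10_000.0
)
print(score)
for reason in reasons:
    print("-", reason)
```

The point of the design is that the number never travels alone: whatever consumes the score also receives the list of rules that produced it.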

Why is Explainability Important for Auditors?

For audit partners, managers, and seniors, explainability isn't just a technical feature; it's fundamental to the audit process. Without it, AI-driven insights can feel like a "black box," making it difficult to integrate them into audit evidence or explain them to clients. It helps auditors:

- Build Trust: Understand how a risk score was calculated, fostering confidence in the AI's output.

- Justify Findings: Clearly articulate the basis for identified risks to stakeholders and clients.

- Maintain Professional Skepticism: Challenge and validate the AI's conclusions rather than blindly accepting them.

- Improve Audit Quality: Use AI as a powerful tool to augment, not replace, human judgment.

Challenges with Traditional Risk Scoring

Traditional risk scoring methods often rely on manual processes, sampling, and subjective judgments, which can introduce inconsistencies and limitations. The sheer volume of data in modern enterprises makes it challenging for human auditors to identify all potential risks effectively. This is where AI technology steps in, but without explainability, it can create new challenges.

Limitations of Manual Risk Assessment

Auditors frequently face several pain points with manual risk assessment, which AI technology aims to address. These include:

a) Data Volume: Manually reviewing large datasets like general ledgers for anomalies is time-consuming and prone to human error.

b) Inconsistency: Risk scoring can vary across different audit teams or even individual auditors due to subjective interpretations.

c) Sampling Limitations: Relying on samples means potential risks in the unexamined population might be missed, leading to incomplete assurance.

d) Lack of Granularity: Traditional methods often provide high-level risk indicators without drilling down into specific transactional details.

The "Black Box" Problem in AI

While AI offers immense potential for audit automation, a common concern is the "black box" effect, where the AI provides an answer without revealing its underlying logic. This can be particularly problematic in audit, where transparency and justification are paramount. For example, an AI might flag an account as high-risk, but without knowing *why*, an auditor cannot effectively follow up or explain the finding.

Modern AI audit platforms, such as Finspectors, are designed to mitigate this by providing detailed breakdowns of their risk assessments. They aim to show not just the "what" but also the "why" behind each risk signal, enabling auditors to confidently integrate AI insights into their workflow.

Key Features of Explainable Risk Scoring

Platforms that excel in explainable risk scoring offer specific features that empower auditors to understand and validate AI-driven insights. These features transform complex AI outputs into actionable intelligence, complementing auditor judgment rather than replacing it.

Components of Transparent Risk Scoring

When evaluating audit automation platforms, look for these critical components that contribute to explainable risk scoring:

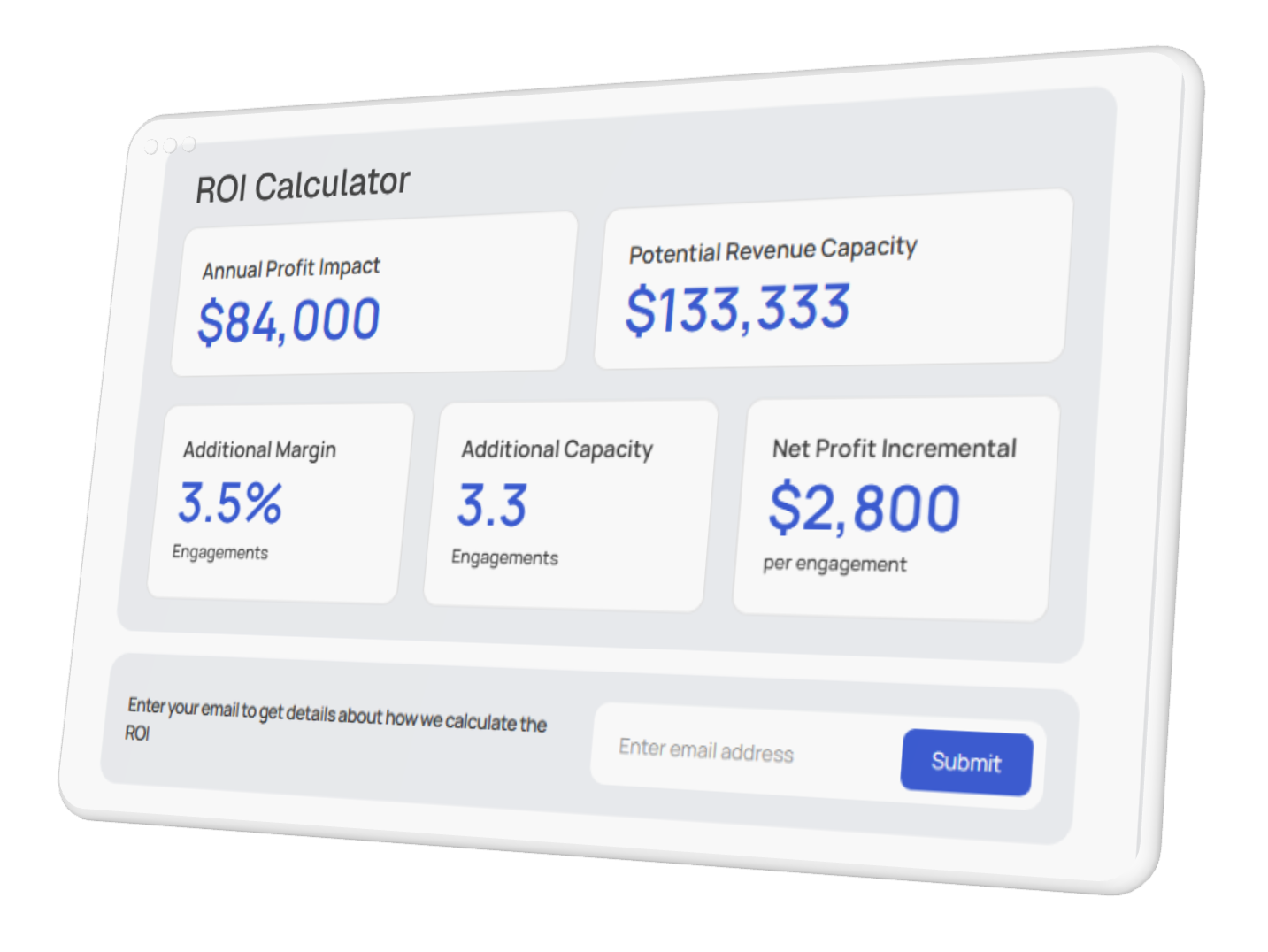

- Detailed Risk Signals: The platform should highlight specific data points or patterns that triggered a risk flag. For instance, identifying an unusual spike in vendor payments at period-end or a series of journal entries lacking proper approval.

- GL Analytics Breakdown: Visualizations and drill-down capabilities into general ledger data, showing how specific transactions or accounts contributed to the overall risk score. This might include identifying unusual GL patterns or significant deviations from historical norms.

- Structured Control-Point Logic: Clear articulation of the control points that were assessed and how their effectiveness (or lack thereof) influenced the risk score. This could involve checking for missing evidence or deviations from established policies.

- Interactive Risk Visualization: Dashboards that allow auditors to explore risk factors, adjust parameters, and see how changes impact the risk score in real-time.
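
Structured control-point logic, in particular, is easy to picture as code. The sketch below checks a single hypothetical control (high-value transactions need a recorded approver) and returns a structured result instead of a bare pass/fail. The `Transaction` and `ControlResult` types and the `APPROVAL_THRESHOLD` value are assumptions, not any vendor's data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Transaction:
    txn_id: str
    amount: float
    approver: Optional[str]  # None means no approval evidence was found


@dataclass
class ControlResult:
    control: str
    txn_id: str
    passed: bool
    detail: str  # the human-readable "why"


APPROVAL_THRESHOLD = 10_000.0  # hypothetical policy limit


def check_approval_control(txn: Transaction) -> ControlResult:
    """Control point: transactions at or above the threshold need an approver."""
    control = "High-value approval"
    if txn.amount < APPROVAL_THRESHOLD:
        return ControlResult(control, txn.txn_id, True, "below approval threshold")
    if txn.approver:
        return ControlResult(control, txn.txn_id, True, f"approved by {txn.approver}")
    return ControlResult(
        control, txn.txn_id, False,
        f"amount {txn.amount:,.2f} has no recorded approver",
    )


transactions = [
    Transaction("T-1", 2_500.0, None),
    Transaction("T-2", 48_000.0, "j.doe"),
    Transaction("T-3", 75_000.0, None),
]
results = [check_approval_control(t) for t in transactions]
failures = [r for r in results if not r.passed]
for r in failures:
    print(r.txn_id, "-", r.detail)
```

Because each result names the control, the transaction, and the reason, a failed check can go straight into a working paper without further translation.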

How AI Technology Enhances Explainability

AI technology, when properly implemented, can significantly enhance the explainability of risk scoring by providing structured, data-driven insights. Instead of a vague "high risk" label, AI can pinpoint the exact anomalies. For example, an AI might identify a series of journal entries posted outside of business hours or a sudden increase in transactions with a newly registered vendor, providing concrete evidence for the auditor to investigate.
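
As a rough illustration of the off-hours example, the following sketch flags postings made on weekends or outside an assumed 09:00 to 18:00 working day and keeps the timestamp evidence with each flag. The working hours and the entry IDs are hypothetical, and a production system would also need to account for time zones and holidays.

```python
from datetime import datetime
from typing import Optional

BUSINESS_START, BUSINESS_END = 9, 18  # assumed working hours (09:00 up to 18:00)


def off_hours_reason(posted_at: datetime) -> Optional[str]:
    """Return a short reason if the posting is outside business hours, else None."""
    if posted_at.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        return f"posted on a weekend ({posted_at:%A %Y-%m-%d})"
    if not BUSINESS_START <= posted_at.hour < BUSINESS_END:
        return (
            f"posted at {posted_at:%H:%M}, "
            f"outside {BUSINESS_START:02d}:00-{BUSINESS_END:02d}:00"
        )
    return None


postings = {
    "JE-2001": datetime(2024, 3, 12, 14, 5),   # Tuesday afternoon
    "JE-2002": datetime(2024, 3, 12, 23, 40),  # Tuesday late evening
    "JE-2003": datetime(2024, 3, 16, 10, 0),   # Saturday morning
}

flags = {}
for entry_id, posted_at in postings.items():
    reason = off_hours_reason(posted_at)
    if reason is not None:
        flags[entry_id] = reason

for entry_id, reason in flags.items():
    print(entry_id, "-", reason)
```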

Platforms like Finspectors leverage AI technology to analyze vast amounts of data, identifying subtle risk signals that human auditors might miss. By integrating data-driven risk scoring, GL analytics, and document checks, these tools provide a comprehensive and transparent view of potential risks, allowing auditors to focus their efforts more effectively.

| Feature | Description | Benefit for Auditors | Example |
| --- | --- | --- | --- |
| Risk Signal Detail | Identifies specific data anomalies contributing to risk. | Pinpoints exact areas for investigation. | Flags journal entries with unusual amounts or descriptions. |
| GL Analytics Breakdown | Visualizes GL patterns and deviations. | Provides contextual understanding of financial data. | Highlights period-end spikes in specific expense accounts. |
| Control-Point Logic | Explains how control effectiveness impacts risk. | Validates control design and operating effectiveness. | Shows missing approvals for high-value transactions. |
| Interactive Visualization | Allows dynamic exploration of risk factors. | Empowers deeper analysis and scenario testing. | Adjusting materiality thresholds to see impact on risk. |
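
The materiality example can be sketched in a few lines: the same population is re-evaluated at different thresholds so you can see how the flagged set changes. The entry IDs, amounts, and thresholds below are made up for illustration, and the absolute-amount comparison is a deliberately simple stand-in for whatever a real platform uses.

```python
def flagged_at(threshold: float, amounts: dict) -> list:
    """Return the IDs of entries whose absolute amount meets the threshold."""
    return sorted(k for k, v in amounts.items() if abs(v) >= threshold)


# Hypothetical journal entry amounts (negative values are credits).
entry_amounts = {
    "JE-1": 1_200.0,
    "JE-2": -18_500.0,
    "JE-3": 52_000.0,
    "JE-4": 9_900.0,
}

for threshold in (5_000.0, 10_000.0, 50_000.0):
    ids = flagged_at(threshold, entry_amounts)
    print(f"threshold {threshold:>9,.0f}: {len(ids)} flagged -> {ids}")
```

An interactive dashboard is essentially this loop driven by a slider, with the added benefit that the auditor sees which items drop in or out at each setting.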

Leading Platforms and Their Approaches

Several audit automation platforms are incorporating AI technology to enhance risk scoring, but their approaches to explainability can vary. Understanding these differences is key to selecting a platform that aligns with your audit methodology and transparency requirements.

Overview of Platform Capabilities

Many GRC and audit automation tools offer advanced risk scoring. For instance, AuditBoard allows users to "score risks using custom formulas that fit your business," which implies a degree of user-defined explainability. Similarly, Scrut offers customizable scoring systems, enabling organizations to tailor risk assessments to their specific needs.

Other platforms, like MetricStream, are noted for their "AI-powered risk identification" capabilities, as mentioned by Network Intelligence. While AI-powered identification is powerful, the crucial aspect for auditors is how transparently these insights are presented.

Examples of Explainable Features in Practice

Let's look at how some platforms approach explainability:

- Finspectors: This platform focuses on providing granular detail, showing how GL analytics, document checks, and structured control-point logic contribute to a risk score. For example, it might highlight an unusual pattern in journal entries, such as a high volume of manual adjustments at month-end, and link it directly to a control weakness.

- ServiceNow GRC: Known for its real-time risk scoring, ServiceNow integrates risk data across various modules. While it provides comprehensive risk dashboards, the level of explainability often depends on how the underlying risk models are configured and documented within the system.

- AuditBoard: Their custom risk formulas allow auditors to define the parameters and weighting for risk factors. This inherent customization provides a degree of explainability, as the auditor understands the logic they themselves built into the system.

The best platforms don't just present a score; they provide a narrative. This narrative might include specific transaction IDs, dates, amounts, and the exact rules or models that triggered the risk flag. This level of detail is invaluable for audit teams.
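
Here is one way such a narrative could be assembled from a user-defined weighted formula. This is a generic sketch, not AuditBoard's formula syntax or any platform's output format; the factor names, the `WEIGHTS` values, and the sample transaction are assumptions.

```python
# Illustrative weights an audit team might define; they sum to 1.0.
WEIGHTS = {"manual_entry": 0.5, "period_end": 0.3, "round_amount": 0.2}


def score_with_contributions(factors: dict) -> tuple:
    """Weighted sum of 0-1 factor values, plus each factor's contribution."""
    contributions = {
        name: WEIGHTS[name] * factors.get(name, 0.0) for name in WEIGHTS
    }
    return round(sum(contributions.values()), 2), contributions


def narrative(txn_id: str, posted_on: str, amount: float, factors: dict) -> str:
    """Render the score together with the factors that drove it."""
    score, contributions = score_with_contributions(factors)
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    drivers = [f"{name} (+{value:.2f})" for name, value in ranked if value > 0]
    return (
        f"{txn_id} on {posted_on} for {amount:,.2f} scored {score:.2f}; "
        f"drivers: {', '.join(drivers)}"
    )


text = narrative(
    "JE-3107", "2024-12-31", 250_000.0,
    {"manual_entry": 1.0, "period_end": 1.0, "round_amount": 0.0},
)
print(text)
```

Since the weights are defined by the audit team, every contribution in the narrative traces back to a choice the team made and can defend.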

Implementing AI for Enhanced Transparency

Integrating AI technology into your audit processes for enhanced transparency requires a thoughtful approach. It's not just about adopting a new tool, but about evolving your methodology to leverage AI's capabilities while maintaining the auditor's critical role.

Steps for Integrating Explainable AI in Audit

To successfully implement AI-driven audit automation with a focus on explainability, consider these steps:

- Define Explainability Needs: Clearly articulate what level of detail and reasoning your audit team requires from AI-generated risk scores. What specific "risk signals" are most valuable?

- Pilot Program: Start with a pilot program on a specific audit area or client to test the platform's explainability features in a controlled environment.

- Auditor Training: Train your audit teams not just on how to use the platform, but how to interpret and validate the AI's explanations. This includes understanding the underlying AI technology and its limitations.

- Continuous Feedback Loop: Establish a process for auditors to provide feedback on the AI's explanations, helping to refine and improve the system's transparency over time.

Best Practices for AI-Driven Workflows

When adopting AI technology for audit, especially for risk scoring, focus on workflows that augment human judgment. For instance, an AI-driven workflow might automatically analyze all general ledger transactions for unusual patterns, flagging potential outliers. An auditor can then drill down into these flagged items, using the AI's explanation to guide their investigation, rather than manually sifting through thousands of entries.
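
A minimal sketch of that workflow, using a plain z-score as a stand-in for whatever model a real platform applies: every entry in the population is tested, and each flag comes with a one-line explanation. The z-score technique, the cutoff of 2.0, and the sample amounts are assumptions for illustration only.

```python
from statistics import mean, stdev


def flag_outliers(amounts: dict, z_cutoff: float = 2.0) -> dict:
    """Test every entry (no sampling) and explain each flag via its z-score."""
    values = list(amounts.values())
    mu, sigma = mean(values), stdev(values)
    if sigma == 0:  # all amounts identical, nothing stands out
        return {}
    flagged = {}
    for entry_id, amount in amounts.items():
        z = (amount - mu) / sigma
        if abs(z) >= z_cutoff:
            flagged[entry_id] = (
                f"amount {amount:,.2f} is {z:+.1f} standard deviations "
                f"from the population mean of {mu:,.2f}"
            )
    return flagged


# Hypothetical GL amounts: nine routine postings and one large one.
gl_amounts = {
    "JE-01": 980.0, "JE-02": 1_020.0, "JE-03": 1_010.0, "JE-04": 990.0,
    "JE-05": 1_000.0, "JE-06": 1_005.0, "JE-07": 995.0, "JE-08": 1_015.0,
    "JE-09": 985.0, "JE-10": 9_000.0,
}

outliers = flag_outliers(gl_amounts)
for entry_id, why in outliers.items():
    print(entry_id, "-", why)
```

A single z-score is crude (one large entry inflates the standard deviation for everything else), but it shows the shape of the workflow: full-population coverage, a short list of flagged items, and a stated reason the auditor can verify or reject.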

AI-driven audit platforms, such as Finspectors, complement auditor judgment by providing data-driven insights, allowing auditors to focus on higher-value activities that require critical thinking and professional skepticism. This includes reviewing complex transactions, assessing subjective estimates, and engaging in client discussions, all supported by robust, explainable AI analysis.

Benefits of Transparent Risk Assessment

Embracing audit automation platforms with explainable risk scoring offers significant benefits beyond just identifying risks. It leads to more efficient, consistent, and ultimately, higher-quality audits.

Improved Audit Efficiency and Accuracy

By providing clear explanations for risk scores, AI technology helps auditors quickly understand the root cause of potential issues, reducing the time spent on manual investigation. This leads to:

- Faster Risk Identification: AI can process vast datasets much quicker than humans, flagging risks in real-time.

- Targeted Testing: With explainable risk scoring, auditors can focus their testing procedures on the specific areas highlighted by the AI, rather than broad, less efficient sampling.

- Reduced False Positives: When the AI's reasoning is clear, auditors can more easily distinguish between true risks and benign anomalies, improving accuracy.

- Consistent Application: AI applies rules and models consistently, leading to more uniform risk assessments across different audits and teams.

Enhanced Stakeholder Confidence

Transparent risk assessment builds trust not only within the audit team but also with external stakeholders, including management, audit committees, and regulators. When auditors can clearly articulate the basis for their findings, it demonstrates a rigorous and data-driven approach to assurance. This can be particularly important for compliance audits, where demonstrating adherence to regulations is paramount, as highlighted by resources like Vanta and Scrut.

For example, if an AI flags an unusual vendor payment, the ability to show the exact GL entries, the vendor's history, and the specific risk model that triggered the alert provides concrete evidence that strengthens the audit's credibility.

Future of AI in Audit Automation

The role of AI technology in audit automation is continuously evolving, with a clear trend towards even greater explainability and integration. As AI models become more sophisticated, the demand for transparent insights will only grow, shaping the next generation of audit platforms.

Emerging Trends in Explainable AI

We're seeing several exciting trends that will further enhance explainable risk scoring in audit:

- Contextual Explanations: AI systems will provide explanations tailored to the auditor's specific query or context, making them even more relevant and actionable.

- Causal Inference: Future AI models may move beyond correlation to identify causal relationships between events and risks, offering deeper insights into root causes.

- Human-in-the-Loop AI: Increased collaboration between auditors and AI, where auditors can easily provide feedback to refine AI models and their explanations in real-time.

- Standardized Explainability Metrics: Development of industry standards for measuring and comparing the explainability of different AI systems.

The Evolving Role of the Auditor

As AI technology takes on more of the repetitive, data-intensive tasks, the auditor's role will shift towards higher-level analysis, critical thinking, and strategic advisory. Auditors will become "AI interpreters," leveraging explainable AI to gain deeper insights and communicate complex findings more effectively. This means a greater focus on:

- Interpreting AI Outputs: Understanding the nuances of AI-generated risk signals and their implications.

- Validating AI Models: Ensuring the AI models are fair, unbiased, and accurately reflect the audit objectives.

- Strategic Risk Assessment: Using AI insights to inform broader strategic risk assessments and provide more valuable recommendations to clients.

- Ethical AI Oversight: Ensuring the responsible and ethical use of AI in audit, particularly concerning data privacy and bias.

Platforms like Finspectors are at the forefront of this evolution, providing tools that empower auditors to embrace AI technology as a powerful ally, ensuring that audit remains a profession of judgment, supported by intelligent automation.

Conclusion

Choosing an audit automation platform with the most explainable risk scoring is paramount for modern audit teams. It's not enough for AI technology to simply identify risks; auditors need to understand the underlying rationale to effectively exercise professional skepticism, justify findings, and build trust with stakeholders. Platforms that offer detailed risk signals, GL analytics breakdowns, and structured control-point logic, such as Finspectors, empower auditors to leverage AI as a powerful complement to their judgment.

As the audit landscape continues to evolve, the demand for transparent, data-driven insights will only grow. By prioritizing explainable AI, audit firms can enhance efficiency, improve accuracy, and elevate the overall quality of their assurance services, ensuring they remain at the forefront of the profession.