TL;DR

Sampling can miss low-frequency, high-impact issues. Real-time analytics scan full populations and surface exceptions early.

Start with reliable data and clear audit assertions. Lead with simple rules, and add ML only where it catches patterns they miss.

Treat every alert as a hypothesis. Investigate it to resolution and document the work in an evidence packet detailed enough for another auditor to reperform.

Prove value with four metrics: coverage, exception precision, cycle time, and detection rate.

Why sampling breaks at modern scale

Audit populations have grown faster than audit hours. A five percent sample can miss rare but material problems such as one-time vendor switches, end-of-period adjustments, and small multi-step schemes. Real-time auditing shifts from occasional testing to continuous scanning, which improves both the timing and the quality of evidence.
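To put a number on that risk, the sketch below uses the hypergeometric distribution to compute the chance that a simple random sample contains none of a handful of problem items. The population size, sample size, and problem count are illustrative assumptions, not figures from any engagement.

```python
from math import comb


def prob_sample_misses_all(population: int, sample: int, problems: int) -> float:
    """Probability that a simple random sample drawn without replacement
    contains none of the problem items (hypergeometric, zero hits).
    comb() returns 0 when the sample is too large to avoid every problem item."""
    return comb(population - problems, sample) / comb(population, sample)


# Illustrative assumption: 10,000 invoices, 3 of them problematic, 5% sample.
p_miss = prob_sample_misses_all(population=10_000, sample=500, problems=3)
print(f"Chance the sample contains none of the 3 items: {p_miss:.1%}")  # 85.7%
```

Under these assumptions a 5% sample misses all three items roughly 86% of the time, while a full-population scan at least evaluates each of them.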

What real-time auditing actually means

Real-time does not mean constant firefighting. It means the audit team can ingest data frequently, apply defined checks on full populations, route exceptions to reviewers, and retain a transparent trail from alert to conclusion. The goal is persuasive evidence that arrives sooner and is easier to defend.
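A minimal sketch of that loop, assuming records arrive in batches as Python dicts and each check is a simple predicate. The check names, thresholds, and fields (`id`, `amount`, `approver`) are hypothetical; the point is that every record in the batch is checked, every hit is routed for review, and each routing is written to an append-only trail tied to the batch that produced it.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

Check = Callable[[dict], bool]


@dataclass
class AuditTrail:
    """Append-only log linking each routed exception to its check and data batch."""
    entries: list = field(default_factory=list)

    def log(self, batch_id: str, check_name: str, record_id: str, status: str) -> None:
        self.entries.append({
            "logged_at": datetime.now(timezone.utc).isoformat(),
            "batch_id": batch_id,
            "check": check_name,
            "record_id": record_id,
            "status": status,
        })


def run_batch(batch_id: str, records: list[dict], checks: dict[str, Check],
              trail: AuditTrail) -> list[tuple[str, str]]:
    """Apply every check to every record in the batch (the full population,
    not a sample) and route each hit to a review queue."""
    review_queue = []
    for record in records:
        for name, check in checks.items():
            if check(record):
                review_queue.append((name, record["id"]))
                trail.log(batch_id, name, record["id"], status="routed_for_review")
    return review_queue


# Hypothetical checks and batch, for illustration only.
checks = {
    "amount_over_threshold": lambda r: r["amount"] > 10_000,
    "missing_approver": lambda r: not r.get("approver"),
}
batch = [
    {"id": "INV-1", "amount": 250.0, "approver": "alice"},
    {"id": "INV-2", "amount": 12_500.0, "approver": ""},
]
trail = AuditTrail()
queue = run_batch("batch-2024-06-30", batch, checks, trail)
print(queue)  # [('amount_over_threshold', 'INV-2'), ('missing_approver', 'INV-2')]
```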

A repeatable workflow you can trust

Define the objective: Name the assertion and the risk, for example completeness of payables or existence of revenue.

Trace data lineage: Record sources, time windows, joins, filters, and any manual steps. Reconcile the extracted population to system control totals.

Evaluate reliability: Test controls over information produced by the entity (IPE), or reperform key extractions, before relying on reports.

Design the method: Choose rules, statistical checks, or ML that respond to the specific risk. Set thresholds tied to materiality and define exception categories.

Run, triage, and investigate: Prioritize by risk, obtain corroborating evidence, and move each item to a clear disposition such as valid (no issue), error, or policy breach.

Conclude and document: Produce a short evidence packet covering the objective, lineage, parameters, results, investigation notes, and final conclusion.
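The lineage, reliability, and documentation steps can be sketched as two small helpers: one reconciles an extract to system control totals before reliance, the other bundles the items the workflow lists into a single record. The function names, field names, and figures are hypothetical; `Decimal` is used so that currency totals reconcile exactly rather than to within floating-point error.

```python
from decimal import Decimal


def reconcile(extract_rows: list[dict], control_count: int,
              control_total: Decimal, tolerance: Decimal = Decimal("0.00")) -> dict:
    """Compare an extracted population with system control totals before reliance."""
    row_count = len(extract_rows)
    amount_total = sum((Decimal(r["amount"]) for r in extract_rows), Decimal("0"))
    difference = amount_total - control_total
    return {
        "row_count": row_count,
        "control_count": control_count,
        "amount_total": amount_total,
        "control_total": control_total,
        "difference": difference,
        "reconciled": row_count == control_count and abs(difference) <= tolerance,
    }


def evidence_packet(objective: str, lineage: dict, parameters: dict,
                    results: dict, notes: list[str], conclusion: str) -> dict:
    """Bundle the workflow's documentation items into one reviewable record."""
    return {
        "objective": objective,
        "lineage": lineage,
        "parameters": parameters,
        "results": results,
        "investigation_notes": notes,
        "conclusion": conclusion,
    }


# Hypothetical payables extract and control totals.
rows = [{"id": "INV-1", "amount": "1200.50"}, {"id": "INV-2", "amount": "799.50"}]
recon = reconcile(rows, control_count=2, control_total=Decimal("2000.00"))
packet = evidence_packet(
    objective="Completeness of payables",
    lineage={"source": "AP subledger", "window": "2024-06", "filters": "none"},
    parameters={"materiality_threshold": "5000.00"},
    results={"reconciliation": recon},
    notes=["Extract agreed to control totals before testing."],
    conclusion="Population complete for testing purposes.",
)
print(recon["reconciled"], recon["difference"])  # True 0.00
```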

Methods that work in practice

Deterministic rules: Duplicate detection, mismatched fields, round-value spikes, weekend postings, and user-role anomalies. These are fast, explainable, and easy for another auditor to reperform.
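A sketch of three of these rules, assuming invoices and journal entries are available as lists of dicts with hypothetical fields (`vendor`, `invoice_no`, `amount`, `posted`). The round-value function only flags round amounts; a spike check would then compare that share against prior periods.

```python
from collections import defaultdict
from datetime import date


def duplicate_invoices(invoices: list[dict]) -> list[list[str]]:
    """Group invoice IDs that share vendor, normalized invoice number, and amount."""
    groups = defaultdict(list)
    for inv in invoices:
        key = (inv["vendor"], inv["invoice_no"].strip().upper(), inv["amount"])
        groups[key].append(inv["id"])
    return [ids for ids in groups.values() if len(ids) > 1]


def weekend_postings(entries: list[dict]) -> list[str]:
    """Flag entries posted on a Saturday or Sunday (weekday() returns 5 or 6)."""
    return [e["id"] for e in entries if e["posted"].weekday() >= 5]


def round_amounts(entries: list[dict], unit: int = 1000) -> list[str]:
    """Flag whole-number amounts that are exact multiples of `unit`."""
    return [e["id"] for e in entries if e["amount"] % unit == 0]


# Hypothetical data.
invoices = [
    {"id": "A1", "vendor": "V01", "invoice_no": "inv-100", "amount": 4800},
    {"id": "A2", "vendor": "V01", "invoice_no": "INV-100 ", "amount": 4800},
    {"id": "A3", "vendor": "V02", "invoice_no": "INV-100", "amount": 4800},
]
entries = [
    {"id": "J1", "posted": date(2024, 6, 29), "amount": 5000},  # a Saturday
    {"id": "J2", "posted": date(2024, 6, 28), "amount": 1234},  # a Friday
]
print(duplicate_invoices(invoices))  # [['A1', 'A2']]
print(weekend_postings(entries))     # ['J1']
print(round_amounts(entries))        # ['J1']
```

Normalizing the invoice number before grouping is what catches A1 and A2; A3 shares the number but belongs to a different vendor, so it is not treated as a duplicate.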

Statistical checks: Z-scores, interquartile ranges, and ratio expectations by segment. These are good for outliers and unusual shifts.
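A sketch of segment-level z-score and IQR checks using only the standard library, assuming rows carry hypothetical `segment` and `amount` fields. The limits of 3.0 standard deviations and 1.5 times the IQR are common conventions, not prescribed values.

```python
from collections import defaultdict
from statistics import mean, pstdev, quantiles


def segment_outliers(rows: list[dict], z_limit: float = 3.0, iqr_k: float = 1.5) -> dict:
    """Flag amounts that are outliers within their own segment by z-score or IQR fence."""
    by_segment = defaultdict(list)
    for r in rows:
        by_segment[r["segment"]].append(r)

    flags = {"zscore": [], "iqr": []}
    for segment_rows in by_segment.values():
        amounts = [r["amount"] for r in segment_rows]
        if len(amounts) < 4:
            continue  # too few points for stable statistics
        mu, sigma = mean(amounts), pstdev(amounts)
        q1, _, q3 = quantiles(amounts, n=4)
        low, high = q1 - iqr_k * (q3 - q1), q3 + iqr_k * (q3 - q1)
        for r in segment_rows:
            if sigma > 0 and abs(r["amount"] - mu) / sigma > z_limit:
                flags["zscore"].append(r["id"])
            if not low <= r["amount"] <= high:
                flags["iqr"].append(r["id"])
    return flags


# Hypothetical amounts for two segments with very different typical values.
north = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 99, 100, 500]
south = [1000, 1010, 990, 1005, 995]
rows = [{"id": f"N{i}", "segment": "North", "amount": a} for i, a in enumerate(north, 1)]
rows += [{"id": f"S{i}", "segment": "South", "amount": a} for i, a in enumerate(south, 1)]
print(segment_outliers(rows))  # {'zscore': ['N13'], 'iqr': ['N13']}
```

Pairing the two tests is deliberate: a single extreme value inflates its segment's standard deviation, and with the population standard deviation no z-score in a segment of n values can exceed sqrt(n - 1), so z-scores alone understate extremes in small segments. The South amounts would look extreme next to North, but segmentation keeps them from being flagged.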

Machine learning: Isolation Forest for individually anomalous records in complex, multi-field data, Local Outlier Factor for records that deviate from their nearest peers, and simple autoencoders where the data is high-dimensional. Use ML when rules and basic statistics miss meaningful patterns. Keep parameters documented and stable.
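To show the idea behind Local Outlier Factor without extra dependencies, here is a small pure-Python version that compares each point's local density with that of its k nearest neighbors. It is an illustrative sketch, assuming numeric feature tuples already on comparable scales and exactly k neighbors per point; for real populations a maintained implementation such as scikit-learn's `LocalOutlierFactor` would normally be the better choice.

```python
from math import dist


def local_outlier_factor(points: list[tuple[float, ...]], k: int = 3) -> list[float]:
    """Local Outlier Factor scores using exactly k nearest neighbors per point.

    A score near 1 means the point is about as dense as its neighbors; a score
    well above 1 means it sits in a sparser region than they do.
    """
    n = len(points)
    if not 0 < k < n:
        raise ValueError("k must be between 1 and len(points) - 1")

    # k nearest neighbors of each point (excluding itself) as (distance, index).
    neighbors = []
    for i in range(n):
        others = sorted((dist(points[i], points[j]), j) for j in range(n) if j != i)
        neighbors.append(others[:k])

    # k-distance: distance from each point to its k-th nearest neighbor.
    k_distance = [nbrs[-1][0] for nbrs in neighbors]

    # Local reachability density: inverse of the mean reachability distance,
    # where reach-dist(p, o) = max(k-distance(o), d(p, o)).
    lrd = []
    for i in range(n):
        reach = [max(k_distance[j], d) for d, j in neighbors[i]]
        lrd.append(1.0 / (sum(reach) / k + 1e-10))  # epsilon guards duplicate points

    # LOF: mean ratio of the neighbors' densities to the point's own density.
    return [sum(lrd[j] for _, j in neighbors[i]) / k / lrd[i] for i in range(n)]


# Hypothetical two-feature records: a tight cluster plus one isolated point.
points = [(1.0, 1.0), (1.1, 1.0), (0.9, 1.0), (1.0, 1.1), (1.0, 0.9), (1.05, 1.05), (5.0, 5.0)]
scores = local_outlier_factor(points, k=3)
print(max(range(len(scores)), key=scores.__getitem__))  # 6, the isolated point
```

The cluster members score close to 1, while the isolated point scores far higher because its neighbors are much denser than it is.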

Where continuous analytics pay off

Payables and vendors: Duplicate invoices, sudden spikes in spend with a vendor, bank detail changes, and split invoices that each fall below approval limits.
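A sketch of the split-invoice test for payables, assuming invoices carry hypothetical `vendor`, `date`, and `amount` fields and that a split looks like several below-limit invoices from one vendor within a few days whose combined value exceeds the approval limit. The three-day window is an assumption to tune per engagement.

```python
from collections import defaultdict
from datetime import date, timedelta


def split_invoice_candidates(invoices: list[dict], approval_limit: float,
                             window_days: int = 3) -> list[str]:
    """Flag below-limit invoices from one vendor that fall within `window_days`
    of each other and together exceed the approval limit."""
    by_vendor = defaultdict(list)
    for inv in invoices:
        if inv["amount"] < approval_limit:  # only invoices that avoid approval individually
            by_vendor[inv["vendor"]].append(inv)

    flagged = set()
    for vendor_invoices in by_vendor.values():
        vendor_invoices.sort(key=lambda inv: inv["date"])
        start = 0
        for end in range(len(vendor_invoices)):
            # Advance the window start until the window spans at most window_days.
            while (vendor_invoices[end]["date"] - vendor_invoices[start]["date"]
                   > timedelta(days=window_days)):
                start += 1
            window = vendor_invoices[start:end + 1]
            if len(window) > 1 and sum(inv["amount"] for inv in window) > approval_limit:
                flagged.update(inv["id"] for inv in window)
    return sorted(flagged)


# Hypothetical invoices; the approval limit of 5,000 is an assumption.
invoices = [
    {"id": "P1", "vendor": "V01", "date": date(2024, 6, 3), "amount": 2400},
    {"id": "P2", "vendor": "V01", "date": date(2024, 6, 4), "amount": 2450},
    {"id": "P3", "vendor": "V01", "date": date(2024, 6, 5), "amount": 2300},
    {"id": "P4", "vendor": "V01", "date": date(2024, 6, 20), "amount": 2000},
    {"id": "P5", "vendor": "V02", "date": date(2024, 6, 3), "amount": 4900},
    {"id": "P6", "vendor": "V02", "date": date(2024, 6, 25), "amount": 4900},
    {"id": "P7", "vendor": "V03", "date": date(2024, 6, 3), "amount": 7000},
]
print(split_invoice_candidates(invoices, approval_limit=5000))  # ['P1', 'P2', 'P3']
```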

Journal entries: Odd-hour postings, unusual GL account pairings, and high-impact entries made by users with little historical activity.

Revenue and receivables: Bursts of returns near period close, unusual price or discount patterns, and regional outliers that conflict with history.

Access and configuration: Rapid permission changes, streaks of failed logins, and edits to sensitive settings within short windows.

Common pitfalls and how to avoid them

Unreliable inputs: Dashboards or exports used without testing their completeness and accuracy. Reconcile totals and reperform key steps before relying on them.

Method mismatch: An impressive model that does not address the assertion. Start with the risk, then select the technique.

Alert fatigue: Too many low-quality exceptions. Segment populations, tune thresholds, and combine rules with a second pass that raises precision.
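One way to build that second pass, sketched under assumptions: each first-pass alert names the record and the check that fired, and a record is escalated only when at least two distinct checks hit it and its amount meets a materiality-linked floor. Field names and thresholds are hypothetical. This trades some recall for precision, so suppressed alerts are better retained for periodic review than discarded.

```python
def second_pass(alerts: list[dict], min_signals: int = 2,
                materiality: float = 0.0) -> list[str]:
    """Keep only records hit by at least `min_signals` distinct checks and whose
    amount is at or above a materiality-linked floor."""
    signals: dict[str, set[str]] = {}
    amounts: dict[str, float] = {}
    for alert in alerts:
        signals.setdefault(alert["record_id"], set()).add(alert["check"])
        amounts[alert["record_id"]] = alert["amount"]
    return sorted(
        rid for rid, checks in signals.items()
        if len(checks) >= min_signals and amounts[rid] >= materiality
    )


# Hypothetical first-pass alerts on journal entries.
alerts = [
    {"record_id": "J1", "check": "weekend_posting", "amount": 120_000},
    {"record_id": "J1", "check": "rare_user", "amount": 120_000},
    {"record_id": "J2", "check": "weekend_posting", "amount": 90_000},
    {"record_id": "J3", "check": "round_amount", "amount": 2_000},
    {"record_id": "J3", "check": "weekend_posting", "amount": 2_000},
]
print(second_pass(alerts, min_signals=2, materiality=50_000))  # ['J1']
```

J2 has only one signal and J3 falls below the floor, so only J1 reaches a reviewer.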

Thin documentation: Steps are recorded but no conclusion is reached. Always link results to the assertion and to materiality.

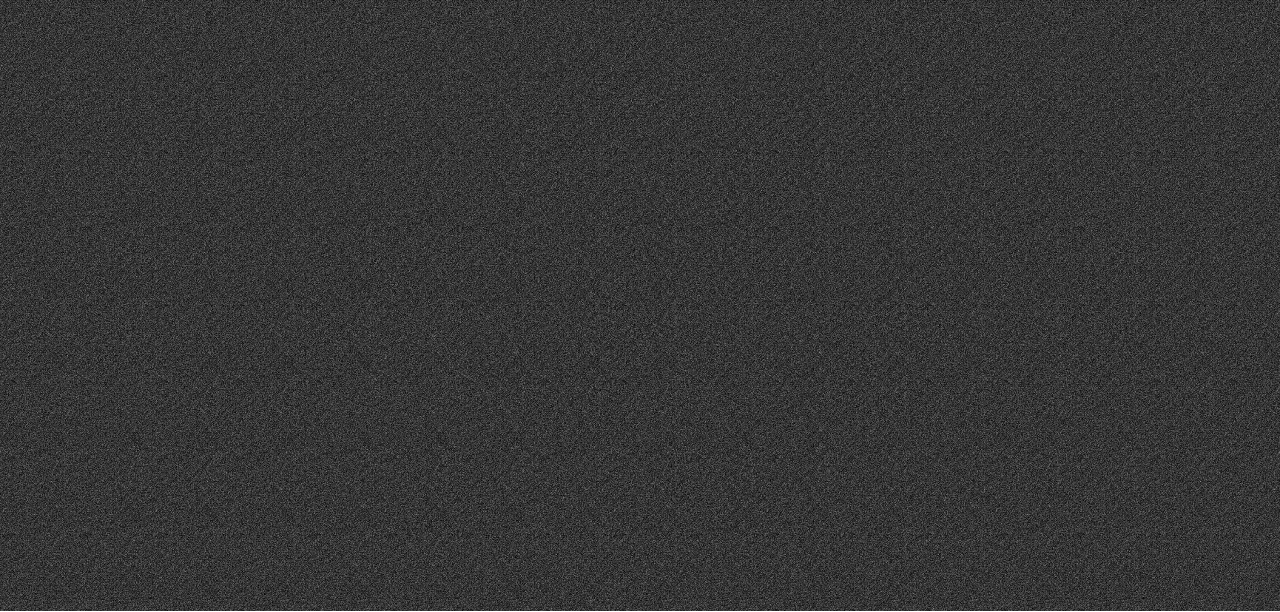

Proving value without hype

Measure outcomes on every engagement:

Audit coverage ratio: Transactions analyzed divided by total transactions in the population, expressed as a percentage.

Exception precision: Confirmed issues divided by total exceptions reviewed.

Cycle time: Days from data receipt to conclusion on significant assertions.

High-risk detection rate: Confirmed high-risk findings divided by all known or subsequently confirmed high-risk issues.

These measures show whether analytics improve quality and speed without making unsupported promises.
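The four measures reduce to simple ratios and a date difference. A sketch with hypothetical engagement figures and a hypothetical function name, returning `None` where a denominator is zero rather than reporting a misleading rate:

```python
from datetime import date


def engagement_metrics(analyzed: int, population: int, confirmed_issues: int,
                       exceptions_reviewed: int, data_received: date,
                       concluded: date, high_risk_found: int,
                       high_risk_known: int) -> dict:
    """Compute coverage, exception precision, cycle time, and high-risk detection rate."""
    def ratio(num: float, den: float):
        return num / den if den else None  # avoid dividing by zero

    return {
        "coverage_pct": ratio(100 * analyzed, population),
        "exception_precision": ratio(confirmed_issues, exceptions_reviewed),
        "cycle_time_days": (concluded - data_received).days,
        "high_risk_detection_rate": ratio(high_risk_found, high_risk_known),
    }


# Hypothetical figures for one engagement.
m = engagement_metrics(
    analyzed=48_000, population=50_000,
    confirmed_issues=30, exceptions_reviewed=120,
    data_received=date(2024, 7, 1), concluded=date(2024, 7, 7),
    high_risk_found=4, high_risk_known=5,
)
print(m)
# {'coverage_pct': 96.0, 'exception_precision': 0.25, 'cycle_time_days': 6, 'high_risk_detection_rate': 0.8}
```

Because the detection-rate denominator includes issues confirmed later, that figure may need restating after the engagement closes.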

Bottom line

Real-time auditing is not a switch you flip. It is a disciplined way to combine reliable data, targeted methods, responsible thresholds, and clear documentation. Done well, it moves the team beyond sample dependence, raises the quality of evidence, and helps clients act sooner on the issues that matter.